2026-04-03 09:30:34

Interesting for a sense of how much research is missing from 'global' platforms, based on where and what language it was published in. Focuses on Moldova, Ukraine, Georgia, Germany. LSE Impact blog post: https://

2026-04-08 00:44:31

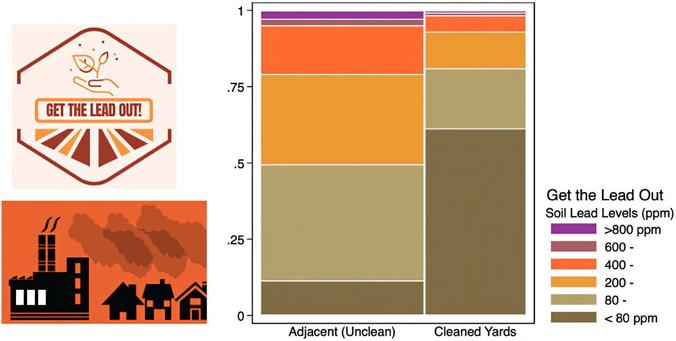

Study finds 70% of remediated Los Angeles yards still exceed lead limit #LosAngeles

2026-01-22 16:28:34

Fighting attacks on knowledge requires understanding the terrain! Register for this first #DefendResearch webinar to hear from PEN America about their new report “America’s Censored Campuses” analyzing #censorship within research and education.

2026-03-24 07:38:32

Democratizing AI: A Comparative Study in Deep Learning Efficiency and Future Trends in Computational Processing

Lisan Al Amin, Md Ismail Hossain, Rupak Kumar Das, Mahbubul Islam, Saddam Mukta, Abdulaziz Tabbakh

https://arxiv.org/abs/2603.20920 https://arxiv.org/pdf/2603.20920 https://arxiv.org/html/2603.20920

arXiv:2603.20920v1 Announce Type: new

Abstract: The exponential growth in data has intensified the demand for computational power to train large-scale deep learning models. However, the rapid growth in model size and complexity raises concerns about equal and fair access to computational resources, particularly under increasing energy and infrastructure constraints. GPUs have emerged as essential for accelerating such workloads. This study benchmarks four deep learning models (Conv6, VGG16, ResNet18, CycleGAN) using TensorFlow and PyTorch on Intel Xeon CPUs and NVIDIA Tesla T4 GPUs. Our experiments demonstrate that, on average, GPU training achieves speedups ranging from 11x to 246x depending on model complexity, with lightweight models (Conv6) showing the highest acceleration (246x), mid-sized models (VGG16, ResNet18) achieving 51-116x speedups, and complex generative models (CycleGAN) reaching 11x improvements compared to CPU training. Additionally, in our PyTorch vs. TensorFlow comparison, we observed that TensorFlow's kernel-fusion optimizations reduce inference latency by approximately 15%. We also analyze GPU memory usage trends and project requirements through 2025 using polynomial regression. Our findings highlight that while GPUs are essential for sustaining AI's growth, democratized and shared access to GPU resources is critical for enabling research innovation across institutions with limited computational budgets.

toXiv_bot_toot

2026-02-25 10:38:51

Hierarchic-EEG2Text: Assessing EEG-To-Text Decoding across Hierarchical Abstraction Levels

Anupam Sharma, Harish Katti, Prajwal Singh, Shanmuganathan Raman, Krishna Miyapuram

https://arxiv.org/abs/2602.20932 https://arxiv.org/pdf/2602.20932 https://arxiv.org/html/2602.20932

arXiv:2602.20932v1 Announce Type: new

Abstract: An electroencephalogram (EEG) records the spatially averaged electrical activity of neurons in the brain, measured from the human scalp. Prior studies have explored EEG-based classification of objects or concepts, often for passive viewing of briefly presented image or video stimuli, with limited classes. Because EEG exhibits a low signal-to-noise ratio, recognizing fine-grained representations across a large number of classes remains challenging; however, abstract-level object representations may exist. In this work, we investigate whether EEG captures object representations across multiple hierarchical levels, and propose episodic analysis, in which a Machine Learning (ML) model is evaluated across various, yet related, classification tasks (episodes). Unlike prior episodic EEG studies that rely on fixed or randomly sampled classes of equal cardinality, we adopt hierarchy-aware episode sampling using WordNet to generate episodes with variable classes of diverse hierarchy. We also present the largest episodic framework in the EEG domain for detecting observed text from EEG signals in the PEERS dataset, comprising $931538$ EEG samples under $1610$ object labels, acquired from $264$ human participants (subjects) performing controlled cognitive tasks, enabling the study of neural dynamics underlying perception, decision-making, and performance monitoring.

We examine how the semantic abstraction level affects classification performance across multiple learning techniques and architectures, providing a comprehensive analysis. Model performance tends to improve when the classification categories are drawn from higher levels of the hierarchy, suggesting sensitivity to abstraction. Our work highlights abstraction depth as an underexplored dimension of EEG decoding and motivates future research in this direction.
