2025-12-31 17:50:39

Sources: Uber is in talks to acquire the parking space reservation app SpotHero; the parking app was last valued at $290M (The Information)

https://www.theinformation.com/articles/uber-considers-deal-parking-app-spothero

2025-10-31 21:00:04

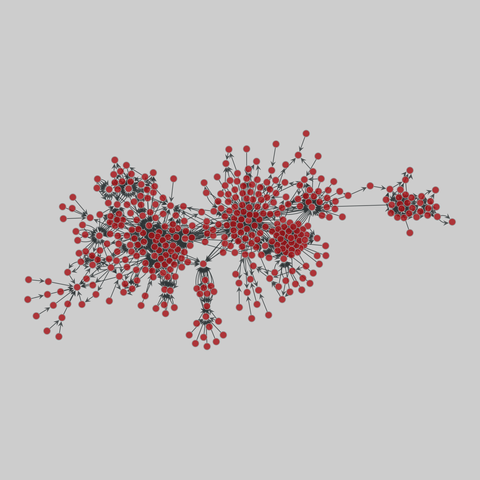

software_dependencies: Software dependencies (2010)

Several networks of software dependencies. Nodes represent libraries and a directed edge denotes a library dependency on another.

This network has 532 nodes and 1730 edges.

Tags: Technological, Software, Unweighted

https://networks.skewed.de/n…

2025-10-31 17:17:32

Happy Halloween! Orbweaver spider. Mary and Joseph Retreat Center, Rancho Palos Verdes, California, USA. August, 2024. #halloween #spider #orbweaver

2025-12-30 16:01:00

PV-Speicherbatterien: Gericht stärkt Herstellern bei Fernabschaltung den Rücken

Werden Photovoltaik-Speicher per Software gedrosselt, müssen Kunden das laut einem Landgericht trotz Leistungsversprechen als Sicherheitsmaßnahme akzeptieren.

2025-10-30 15:54:53

🇺🇦 #NowPlaying on KEXP's #MorningShow

KAYTRANADA:

🎵 SPACE INVADER

#KAYTRANADA

https://sbtrkt.bandcamp.com/track/space-invader-sbtrkt-remix

https://open.spotify.com/track/3fPW4EhpRR6BwLRPDThNeg

2025-10-31 12:59:38

2025-12-29 12:00:04

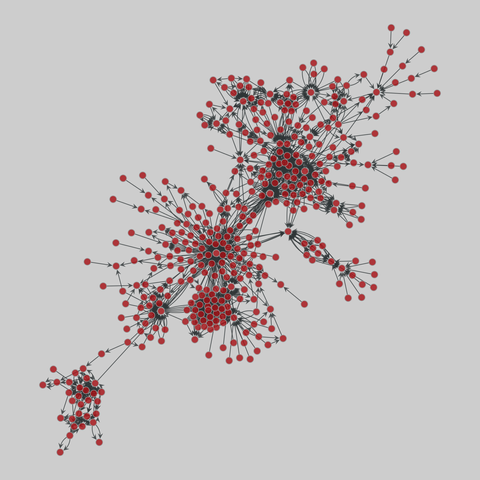

software_dependencies: Software dependencies (2010)

Several networks of software dependencies. Nodes represent libraries and a directed edge denotes a library dependency on another.

This network has 533 nodes and 1735 edges.

Tags: Technological, Software, Unweighted

https://networks.skewed.de/…

2025-10-30 11:18:00

Samsung macht Kühlschrank-Displays zur Werbefläche

Samsung bringt ein Software-Update für smarte Edel-Kühlschränke. Dazu gehört ein Bildschirmschoner, der auch Werbung anzeigt, vorerst allerdings nur in den USA.

https:…

2025-11-29 13:13:00

Airbus A320-Check: Kaum Auswirkungen auf den Flugverkehr

Trotz eines unerwartet nötigen Software-Updates bei vielen Airbus A320-Maschinen bleibt der Flugverkehr stabil. Vereinzelt kann es zu Verspätungen kommen.

htt…

2025-11-30 06:49:00

Airbus-Update nach Zwischenfall – Airlines reagieren rasch

Plötzlicher Höhenverlust, Software-Alarm und schnelle Updates: Airlines und Behörden mussten nach einem Airbus-Vorfall schnell reagieren.

https://www.