2025-12-28 00:51:29

Just a reminder, if you miss #HashTagGames when it is new and live, games play for days.

Also, people play as the time zones change and not just in the first few hours. Feel free to play whenever you can. We get several rushes of new game plays throughout the day.

Occasionally, we still get really old games that pop up with new game plays.

See you in just over an hou…

2025-10-24 07:12:50

Hi everyone, I just wanted to say thank you again for your amazing support for our fundraiser to fund @…’s private scholarship at the University of Milan and get her evacuated from Gaza in November.

Thanks to you, we were able to raise over $15,000 in under 24 hours and meet our goal.

Joy can’t thank you herself at the moment as her family has mov…

2025-12-25 18:50:06

Rumors flew in the hours after a shooting at Brown University killed two students on Dec. 13.

One falsehood had it that one of the victims, a leader of the college Republican Club, was “targeted for her conservative beliefs, hunted, and killed in cold blood.”

Another was that it had been a terrorist attack, a claim that made the rounds when a Palestinian student was identified as a possible suspect two days later and hounded on the internet.

⭐️A churn of disinformation after…

2025-11-28 17:46:54

Here's who Cowboys should root for in Eagles-Bears, other Wk 13 games https://cowboyswire.usatoday.com/story/sports/nfl/cowboys/2025/11/28/week-13-rooting-guide-heres-who-can-help-cowboys-playoff…

2025-11-23 09:12:20

call me crazy but I don't think competitive sports should have moneyback guarantees. if you only support your club when you're winning you should just stick to watching sports films

#fedifc

2025-11-24 01:00:04

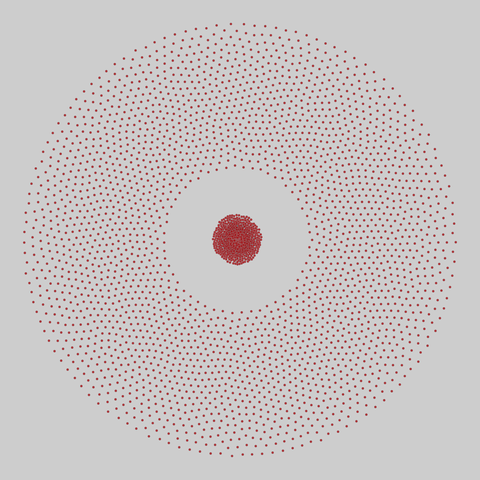

faculty_hiring_us: Faculty hiring networks in the US (2022)

Networks of faculty hiring for all PhD-granting US universities over the decade 2011–2020. Each node is a PhD-granting institution, and a directed edge (i,j) indicates that a person received their PhD from node i and was tenure-track faculty at node j during the time of collection (2011–2020). This dataset is divided into separate networks for all 107 fields, as well as aggregate networks for 8 domains, and an overall network for …
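To make the edge definition concrete, here is a minimal sketch of how such a network could be represented. It is an illustration under stated assumptions, not the dataset's actual format or loader: the institution names are placeholders, and storing a per-edge hire count is an assumption of this sketch.

```python
from collections import defaultdict

# Hypothetical records: (PhD institution i, employing institution j).
# These names are placeholders, not rows from the dataset.
hires = [
    ("Univ A", "Univ B"),
    ("Univ A", "Univ B"),
    ("Univ A", "Univ C"),
    ("Univ B", "Univ C"),
    ("Univ C", "Univ C"),  # self-loop: faculty at their own PhD institution
]

def build_hiring_network(records):
    """Return {i: {j: count}}, where count is the number of people with a
    PhD from i who are faculty at j (the count is an assumption of this sketch)."""
    network = defaultdict(lambda: defaultdict(int))
    for phd_inst, faculty_inst in records:
        network[phd_inst][faculty_inst] += 1
    return {i: dict(targets) for i, targets in network.items()}

def out_degree(network, node):
    """Total number of faculty that `node` has placed (outgoing edges)."""
    return sum(network.get(node, {}).values())

def in_degree(network, node):
    """Total number of faculty that `node` has hired (incoming edges)."""
    return sum(targets.get(node, 0) for targets in network.values())

network = build_hiring_network(hires)
```

In this representation, a high out-degree marks an institution that places many of its PhDs as faculty, and a high in-degree marks one that hires many.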

2025-11-03 10:16:54

Adding another post. This one is a bit less polished, but I want to get it out. As things get harder for everyone, I'm seeing a greater tendency to want to grasp onto revolutionary fiction such as #Andor. I think there's value in that, but it has to come with an informed critique.

> We are so thirsty for hope that we will drink it up, even when that hope comes from a fiction and the truth behind the hope is poison. In Andor, we see the worst elements sacrifice themselves for some of the best. The revolution goes through a process of purification, the complicated elements weeding themselves out to make room for the simplified good, as the rebellion unifies. In reality, this tends to be the opposite of how things actually work.

> [...]

> [The Urban Guerilla movement of the 60's through the 80's] centered militant revolution. In doing so, they omitted or cut themselves off from the logistic support needed to sustain such revolutionary activity. The trauma of carrying out violence further isolated and radicalized them. Lacking infrastructure for trauma healing, their decay escalated and became unrecoverable. Ultimately, their revolutionary movements both emulated and reinforced the status quo they were trying to resist.

> There emerges a strange historical parallel that is difficult to see from within the dominant paradigm. The competitive politics of electoralism derives from heroic competition, where people (typically men) compete (often violently) for control over a territory or people. Thus the insurrectionary enters into the very same competition as a challenger, not against the system of domination but for control over it. The success of the revolution, then, does not abolish the system of violent domination but rather replaces its management.

> Many modern anarchists will be quick to point out the disconnect between ends and means. While authoritarian projects often assert that "the ends justify the means," and Andor implies the same, anti-authoritarian projects assert the ends and the means are not only united but are, in fact, the same.

This is still very much something I'm actively editing, but I'd still love feedback to help me refine it to its final form. Typo catches and clarifying questions welcome.

#USPol

2025-11-26 07:06:35

Dr. Ralph Abraham has spoken against Covid shots and ended mass vaccination campaigns.

Now he's in a position to make national health decisions.

https://www.nbcnews.com/health/health-news/controversial-louisi…

2025-12-23 12:56:38

NFL Delivers Harsh Punishment for Hit That Injured Cowboys WR https://heavy.com/sports/nfl/dallas-cowboys/nfl-delivers-harsh-punishment-ryan-flournoy/

2025-10-20 05:35:56

My name is Heather, and I was one of the very first hires for Barack Obama’s campaign.

I know how to win the tough fights — because I’ve lived them.

Now I’m leading North Star PAC to stop billionaire Jeffrey Yass and the MAGA machine from buying Pennsylvania’s courts.

If they succeed in the state’s Supreme Court retention election, they’ll rig the maps and lock us out of power for decades — just like they did in Texas.

But I can’t do this without you. While Yass and…