2026-01-20 11:49:44

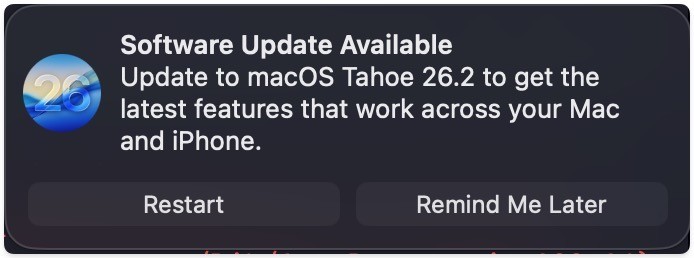

Fuck, no, Apple. I was forced to update to iOS 26 due to the lack of security updates for iOS 18 on devices that can run 26 (i.e., due to extortion), but you’re not infecting my Mac with Liquid Ass.

Notice the dark pattern of pestering you in hopes you will accidentally hit the “Restart” button even though you’ve specifically turned off auto updates. Microsoft-level disrespect for consent here.

(Not surprising from a company that gives golden awards to fascists.)

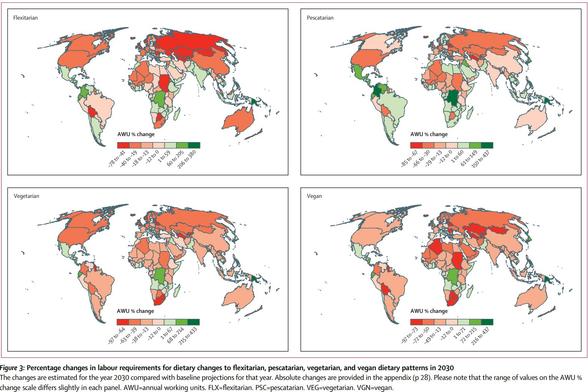

2025-12-21 00:30:16

City Random Manners ✴️

Urban Random Behaviors ✴️

📷 Pentax MX

🎞️ Ilford Pan 100

#filmphotography #Photography #blackandwhite

2025-11-21 20:46:53

Once limited to policing the nation’s boundaries, the Border Patrol has built a surveillance system stretching into the country’s interior that can monitor ordinary Americans’ daily actions and connections for anomalies instead of simply targeting wanted suspects.

The U.S. Border Patrol is monitoring millions of American drivers nationwide in a secretive program to identify and detain people whose travel patterns it deems suspicious, The Associated Press has found.

The …

2025-11-22 00:30:01

Moody Urbanity - ONE 1️⃣

Emotional City - One 1️⃣

📷 Nikon FE

🎞️ Fujifilm NEOPAN SS, expired 1993

#filmphotography #Photography #blackandwhite

2025-11-19 12:28:00

I found a nice big projection screen in our new pattern club studio that wasn't retracting; the plastic end caps had worn out or broken inside. I emailed the manufacturer on the off chance, and they were happy to find some replacements, which they sent out for the cost of postage. Now it works perfectly! Full credit to Sapphire projection screens for opting out of planned obsolescence.

2025-12-21 18:58:11

We’re at the Detroit Institute of Arts Film Theater to see “La Grazia,” the new Paolo Sorrentino movie.

2025-11-15 10:55:25

Have a joyful #DayOfDionysos here at Erotic Mythology! 🍇

"After Dionysos had demonstrated to the Thebans that he was a god, he went to Argos where again he drove the women mad when the people did not pay him honour, and up in the mountains the women fed on the flesh of the babies suckling at their breasts."

Pseudo-Apollodorus, Bibliotheca 2.37

🏛 Dionysos Mosaic, …

2025-11-19 16:06:28

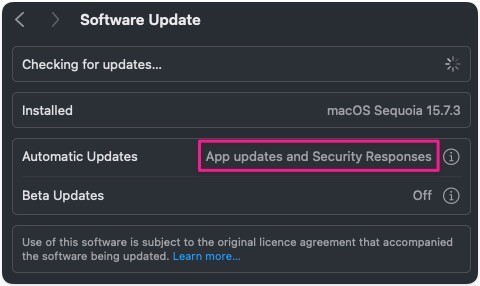

🫛 Global move towards plant-based diets could reshape farming jobs and reduce labor costs worldwide

#food

2026-01-19 00:40:02

US CHILDREN 🧒

We Children 🧒

📷 Zeiss IKON Super Ikonta 533/16

🎞️ Lucky SHD 400

#filmphotography #Photography #blackandwhite

2026-01-13 15:40:07

As Trump threatens Iran, Venezuela, Mexico, Greenland and more, renowned historian Alfred McCoy says the United States is “an empire in decline,” following a predictable pattern of militarism abroad and political instability at home as it loses power and influence on the world stage.

“American politics become increasingly contorted and irrational,” says McCoy.

“I think the thing to do is to realize that we are an empire in decline, …

…