2026-05-13 23:01:51

Geologists on the silver screen—the sequel: #movies, too ...

2026-05-13 17:20:45

Sources: CBS News anchor Tony Dokoupil is broadcasting from Taipei this week after failing to obtain a Chinese visa in time for the Trump-Xi summit in Beijing (Max Tani/Semafor)

https://www.semafor.com/article/05/13/2026

2026-04-14 14:22:42

So to follow up on this, I've caught it in action. Models, when quantized a bit, just do a bit more poorly with short contexts. Even going from f32 (as trained) to bf16 (as usually run) to q8 tends to do okay for "normal" context windows. At q4 you start feeling like "this model is a little stupid and gets stuck sometimes" (it is! It's just that it's still careening about in the space of "plausible" most of the time. Not good guesswork, but still in the zone). With long contexts, the probability of parameters collapsing to zero is higher, so the more context you have, the more likely you are to see brokenness.

And then at Q2 (2 bits per parameter) or Q1, the model falls apart completely. Parameters collapse to zero easily. You start seeing "all work and no play makes Jack a dull boy" sorts of behavior, with intense and unscrutinized repetition, followed by a hard stop when it just stops working.
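A toy sketch of that collapse-to-zero effect, assuming plain symmetric round-to-nearest quantization with one scale for the whole tensor and Gaussian stand-in weights. Real quantizers use per-block scales and other tricks, so treat this as an illustration only; `quantize` and `zero_fraction` are made-up names, not any library's API.

```python
import random


def quantize(weights, bits):
    """Toy symmetric round-to-nearest quantization, then dequantize.

    Assumption: a single scale for the whole list, which is cruder than
    what real block-wise quantizers do. Requires bits >= 2 and at least
    one nonzero weight.
    """
    levels = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits, 1 for 2 bits
    scale = max(abs(w) for w in weights) / levels
    return [round(w / scale) * scale for w in weights]


def zero_fraction(values):
    """Fraction of values that ended up exactly zero."""
    return sum(1 for v in values if v == 0) / len(values)


random.seed(0)
# Hypothetical stand-in for one layer's weights.
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]

for bits in (8, 4, 2):
    share = zero_fraction(quantize(weights, bits))
    print(f"{bits}-bit: {share:.1%} of weights rounded to zero")
```

In this toy setup the share of weights that round to exactly zero grows as the bit width drops, and at 2 bits most of them are gone.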

And quantization is a knob that a model vendor can turn relatively easily. (They have to regenerate the model from the base with more quantization, but it's a data transformation on the order of running a terabyte through a straightforward and fast process, nothing like training.)

If you have 1000 customers and enough equipment to handle the requests of 700, going from bf16 to q8 is a no-brainer. Suddenly you can handle the load and have a little spare capacity. The customers get worse results and probably pay the same per token. (Or they're on a subscription that hides the cost anyway, so you are even freer to make trade-offs. There's a reason that subscription products are kinda poorly described.)

It's also possible for them to vary this across a day. Use the model during quieter periods? Maybe you get an instance running the bf16 version. Use it during a high-use period? You get a Q4 model.

Or intelligent routing is possible. No idea if anyone is doing this, but if they monitor what you send a bit, and you generally shoot for an expensive model for simple requests? They could totally substitute a highly quantized version of the model to answer the question.

There are •so many tricks• that can be pulled here. Some of them are very reasonable trade-offs to make, some of them tread into outright misleading or fraudulent territory, and it's weirdly hard to draw the line between them.

2026-03-13 16:38:45

2026-05-13 04:41:04

Today at 9 a.m., the Heart Times Bike Race kicks off its second edition.

#DotWatching : https://www.followmychallenge.com/live/heart-times-2026/

2026-02-14 19:38:18

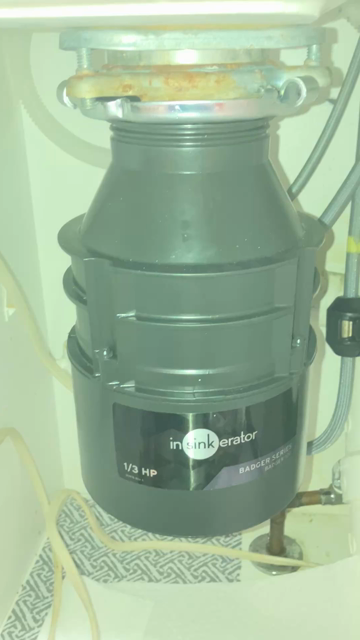

Happily it was a straightforward swap of identical models. Didn’t have to replace the sink flange as a result. It was an awkward business of hefting it up to get it aligned and locked into the flange, but it took only a mild amount of swearing to finally get it right.🤬

No leaks. Orders have been given to unfurl the mission accomplished banner.

(The wiring under this sink could use some taming. Whoever did it was certainly not paid by the hour. 🙄 )

2026-05-13 17:42:48

We went to the cinema in #Ingelheim last night. As part of the Filmauslese, Jim Jarmusch's "Father Mother Sister Brother" was showing. I enjoyed it. We were able to pay for the tickets in advance with #wero. #filmnase

2026-05-14 11:06:55

In her introduction to an event on climate communication, initiator Mare de Wit quoted a man in the movie La Haine.

While falling from a tall building, he kept saying "Jusqu'ici, tout va bien."

So far, so good. A metaphor for climate change, for those of us who haven't been hit hard by it yet.

2026-02-14 16:16:05

2026-05-14 07:05:38

H-Net Job Guide Weekly Report for H-Scholar: 3 May - 10 May

https://ift.tt/vEwxm5M

Third Symposium on Dance, Music, and Performing Arts of the Middle East, North Africa, Central Asia,…

via Input 4 RELCFP