2026-03-31 11:12:48

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[2/5]:

- POTSA: A Cross-Lingual Speech Alignment Framework for Speech-to-Text Translation

Li, Cui, Wang, Ge, Huang, Li, Peng, Lu, Tashi, Wang, Dang

https://arxiv.org/abs/2511.09232 https://mastoxiv.page/@arXiv_csCL_bot/115541846907664054

- Beyond Elicitation: Provision-based Prompt Optimization for Knowledge-Intensive Tasks

Yunzhe Xu, Zhuosheng Zhang, Zhe Liu

https://arxiv.org/abs/2511.10465 https://mastoxiv.page/@arXiv_csCL_bot/115547607561282911

- π-Attention: Periodic Sparse Transformers for Efficient Long-Context Modeling

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.10696 https://mastoxiv.page/@arXiv_csCL_bot/115564418836654965

- Based on Data Balancing and Model Improvement for Multi-Label Sentiment Classification Performanc...

Zijin Su, Huanzhu Lyu, Yuren Niu, Yiming Liu

https://arxiv.org/abs/2511.14073 https://mastoxiv.page/@arXiv_csCL_bot/115575715073023141

- HEAD-QA v2: Expanding a Healthcare Benchmark for Reasoning

Alexis Correa-Guillén, Carlos Gómez-Rodríguez, David Vilares

https://arxiv.org/abs/2511.15355 https://mastoxiv.page/@arXiv_csCL_bot/115581410328165116

- Towards Hyper-Efficient RAG Systems in VecDBs: Distributed Parallel Multi-Resolution Vector Search

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.16681 https://mastoxiv.page/@arXiv_csCL_bot/115603508442305146

- Estonian WinoGrande Dataset: Comparative Analysis of LLM Performance on Human and Machine Transla...

Marii Ojastu, Hele-Andra Kuulmets, Aleksei Dorkin, Marika Borovikova, Dage Särg, Kairit Sirts

https://arxiv.org/abs/2511.17290 https://mastoxiv.page/@arXiv_csCL_bot/115604083224487885

- A Systematic Study of In-the-Wild Model Merging for Large Language Models

Oğuz Kağan Hitit, Leander Girrbach, Zeynep Akata

https://arxiv.org/abs/2511.21437 https://mastoxiv.page/@arXiv_csCL_bot/115621178703846052

- CREST: Universal Safety Guardrails Through Cluster-Guided Cross-Lingual Transfer

Lavish Bansal, Naman Mishra

https://arxiv.org/abs/2512.02711 https://mastoxiv.page/@arXiv_csCL_bot/115655090475535157

- Multilingual Medical Reasoning for Question Answering with Large Language Models

Pietro Ferrazzi, Aitor Soroa, Rodrigo Agerri

https://arxiv.org/abs/2512.05658 https://mastoxiv.page/@arXiv_csCL_bot/115683267711014189

- OnCoCo 1.0: A Public Dataset for Fine-Grained Message Classification in Online Counseling Convers...

Albrecht, Lehmann, Poltermann, Rudolph, Steigerwald, Stieler

https://arxiv.org/abs/2512.09804 https://mastoxiv.page/@arXiv_csCL_bot/115700409397020978

- Does Tone Change the Answer? Evaluating Prompt Politeness Effects on Modern LLMs: GPT, Gemini, an...

Hanyu Cai, Binqi Shen, Lier Jin, Lan Hu, Xiaojing Fan

https://arxiv.org/abs/2512.12812 https://mastoxiv.page/@arXiv_csCL_bot/115729149622659403

- Beg to Differ: Understanding Reasoning-Answer Misalignment Across Languages

Ovalle, Ross, Ruder, Williams, Ullrich, Ibrahim, Sagun

https://arxiv.org/abs/2512.22712 https://mastoxiv.page/@arXiv_csCL_bot/115808161882146194

- Activation Steering for Masked Diffusion Language Models

Adi Shnaidman, Erin Feiglin, Osher Yaari, Efrat Mentel, Amit Levi, Raz Lapid

https://arxiv.org/abs/2512.24143 https://mastoxiv.page/@arXiv_csCL_bot/115819533211103315

- JMedEthicBench: A Multi-Turn Conversational Benchmark for Evaluating Medical Safety in Japanese L...

Liu, Li, Niu, Zhang, Xun, Hou, Wang, Iwasawa, Matsuo, Hatakeyama-Sato

https://arxiv.org/abs/2601.01627 https://mastoxiv.page/@arXiv_csCL_bot/115847901607405421

- FACTUM: Mechanistic Detection of Citation Hallucination in Long-Form RAG

Dassen, Kotula, Murray, Yates, Lawrie, Kayi, Mayfield, Duh

https://arxiv.org/abs/2601.05866 https://mastoxiv.page/@arXiv_csCL_bot/115881545684182376

- †DAGGER: Distractor-Aware Graph Generation for Executable Reasoning in Math Problems

Zabir Al Nazi, Shubhashis Roy Dipta, Sudipta Kar

https://arxiv.org/abs/2601.06853 https://mastoxiv.page/@arXiv_csCL_bot/115887753245730019

- Symphonym: Universal Phonetic Embeddings for Cross-Script Name Matching

Stephen Gadd

https://arxiv.org/abs/2601.06932 https://mastoxiv.page/@arXiv_csCL_bot/115887767008671765

- LLMs versus the Halting Problem: Revisiting Program Termination Prediction

Sultan, Armengol-Estape, Kesseli, Vanegue, Shahaf, Adi, O'Hearn

https://arxiv.org/abs/2601.18987 https://mastoxiv.page/@arXiv_csCL_bot/115972010510378715

- MuVaC: A Variational Causal Framework for Multimodal Sarcasm Understanding in Dialogues

Diandian Guo, Fangfang Yuan, Cong Cao, Xixun Lin, Chuan Zhou, Hao Peng, Yanan Cao, Yanbing Liu

https://arxiv.org/abs/2601.20451 https://mastoxiv.page/@arXiv_csCL_bot/115977891530875024

toXiv_bot_toot

2026-03-03 08:45:46

Military officials question fortifications at site where U.S. troops were killed in Iranian strike (James LaPorta/CBS News)

https://www.cbsnews.com/news/iran-strike-kuwait-officials-question-fortifications/

http://www.memeorandum.com/260303/p3#a260303p3

2026-03-31 11:13:03

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[4/5]:

- Retrieving Climate Change Disinformation by Narrative

Upravitelev, Solopova, Jakob, Sahitaj, Möller, Schmitt

https://arxiv.org/abs/2603.22015 https://mastoxiv.page/@arXiv_csCL_bot/116283633674519408

- PaperVoyager : Building Interactive Web with Visual Language Models

Dasen Dai, Biao Wu, Meng Fang, Wenhao Wang

https://arxiv.org/abs/2603.22999 https://mastoxiv.page/@arXiv_csCL_bot/116289015432093128

- Continual Robot Skill and Task Learning via Dialogue

Weiwei Gu, Suresh Kondepudi, Anmol Gupta, Lixiao Huang, Nakul Gopalan

https://arxiv.org/abs/2409.03166 https://mastoxiv.page/@arXiv_csRO_bot/113089412115632702

- Shifting Perspectives: Steering Vectors for Robust Bias Mitigation in LLMs

Zara Siddique, Irtaza Khalid, Liam D. Turner, Luis Espinosa-Anke

https://arxiv.org/abs/2503.05371 https://mastoxiv.page/@arXiv_csLG_bot/114136994263573386

- SkillFlow: Scalable and Efficient Agent Skill Retrieval System

Fangzhou Li, Pagkratios Tagkopoulos, Ilias Tagkopoulos

https://arxiv.org/abs/2504.06188 https://mastoxiv.page/@arXiv_csAI_bot/114306773220502860

- Large Language Models for Computer-Aided Design: A Survey

Licheng Zhang, Bach Le, Naveed Akhtar, Siew-Kei Lam, Tuan Ngo

https://arxiv.org/abs/2505.08137 https://mastoxiv.page/@arXiv_csLG_bot/114504972217393639

- Structured Agent Distillation for Large Language Model

Liu, Kong, Dong, Yang, Li, Tang, Yuan, Niu, Zhang, Zhao, Lin, Huang, Wang

https://arxiv.org/abs/2505.13820 https://mastoxiv.page/@arXiv_csLG_bot/114544636506163783

- VLM-3R: Vision-Language Models Augmented with Instruction-Aligned 3D Reconstruction

Fan, Zhang, Li, Zhang, Chen, Hu, Wang, Qu, Zhou, Wang, Yan, Xu, Theiss, Chen, Li, Tu, Wang, Ranjan

https://arxiv.org/abs/2505.20279 https://mastoxiv.page/@arXiv_csCV_bot/114578817567171199

- Learning to Diagnose Privately: DP-Powered LLMs for Radiology Report Classification

Bhattacharjee, Tian, Rubin, Lo, Merchant, Hanson, Gounley, Tandon

https://arxiv.org/abs/2506.04450 https://mastoxiv.page/@arXiv_csCR_bot/114635189706505648

- L-MARS: Legal Multi-Agent Workflow with Orchestrated Reasoning and Agentic Search

Ziqi Wang, Boqin Yuan

https://arxiv.org/abs/2509.00761 https://mastoxiv.page/@arXiv_csAI_bot/115140304787881576

- Your Models Have Thought Enough: Training Large Reasoning Models to Stop Overthinking

Han, Huang, Liao, Jiang, Lu, Zhao, Wang, Zhou, Jiang, Liang, Zhou, Sun, Yu, Xiao

https://arxiv.org/abs/2509.23392 https://mastoxiv.page/@arXiv_csAI_bot/115293169353788311

- Person-Centric Annotations of LAION-400M: Auditing Bias and Its Transfer to Models

Leander Girrbach, Stephan Alaniz, Genevieve Smith, Trevor Darrell, Zeynep Akata

https://arxiv.org/abs/2510.03721 https://mastoxiv.page/@arXiv_csCV_bot/115332690912652473

- Agentic Context Engineering: Evolving Contexts for Self-Improving Language Models

Zhang, Hu, Upasani, Ma, Hong, Kamanuru, Rainton, Wu, Ji, Li, Thakker, Zou, Olukotun

https://arxiv.org/abs/2510.04618 https://mastoxiv.page/@arXiv_csLG_bot/115332999596603375

- Mitigating Premature Exploitation in Particle-based Monte Carlo for Inference-Time Scaling

Giannone, Xu, Nayak, Awhad, Sudalairaj, Xu, Srivastava

https://arxiv.org/abs/2510.05825 https://mastoxiv.page/@arXiv_csLG_bot/115338159696513898

- Complete asymptotic type-token relationship for growing complex systems with inverse power-law co...

Pablo Rosillo-Rodes, Laurent Hébert-Dufresne, Peter Sheridan Dodds

https://arxiv.org/abs/2511.02069 https://mastoxiv.page/@arXiv_physicssocph_bot/115496283627867809

- ViPRA: Video Prediction for Robot Actions

Sandeep Routray, Hengkai Pan, Unnat Jain, Shikhar Bahl, Deepak Pathak

https://arxiv.org/abs/2511.07732 https://mastoxiv.page/@arXiv_csRO_bot/115535941444003568

- AISAC: An Integrated multi-agent System for Transparent, Retrieval-Grounded Scientific Assistance

Chandrachur Bhattacharya, Sibendu Som

https://arxiv.org/abs/2511.14043

- VideoARM: Agentic Reasoning over Hierarchical Memory for Long-Form Video Understanding

Yufei Yin, Qianke Meng, Minghao Chen, Jiajun Ding, Zhenwei Shao, Zhou Yu

https://arxiv.org/abs/2512.12360 https://mastoxiv.page/@arXiv_csCV_bot/115729238732682644

- RadImageNet-VQA: A Large-Scale CT and MRI Dataset for Radiologic Visual Question Answering

Léo Butsanets, Charles Corbière, Julien Khlaut, Pierre Manceron, Corentin Dancette

https://arxiv.org/abs/2512.17396 https://mastoxiv.page/@arXiv_csCV_bot/115762705911757243

- Measuring all the noises of LLM Evals

Sida Wang

https://arxiv.org/abs/2512.21326 https://mastoxiv.page/@arXiv_csLG_bot/115779597137785637

toXiv_bot_toot

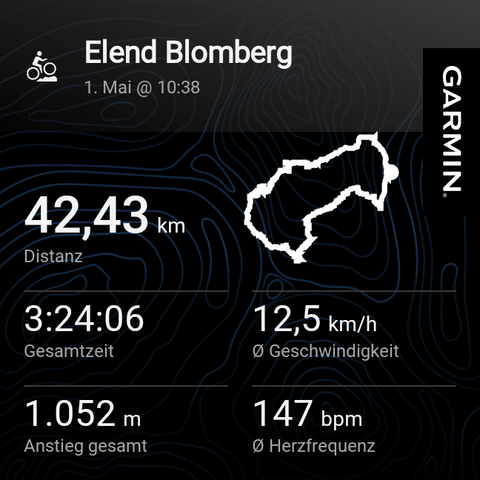

2026-05-01 18:28:45

This was a nice ride. Some sections involved pushing the bike, and some steep parts came as a bit of a surprise. But overall it was nice, with some quite challenging sections. It's a loop I had wanted to try for quite a while.

And it's a new elevation record since I've owned the Garmin 🎉

Only now my #garmin suddenly refuses to connect to my phone. 😵‍💫 Factory reset, all suggestions fro…

2026-03-31 11:12:53

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[3/5]:

- Can Small Language Models Handle Context-Summarized Multi-Turn Customer-Service QA? A Synthetic D...

Lakshan Cooray, Deshan Sumanathilaka, Pattigadapa Venkatesh Raju

https://arxiv.org/abs/2602.00665 https://mastoxiv.page/@arXiv_csCL_bot/116006686092324902

- SEAD: Self-Evolving Agent for Multi-Turn Service Dialogue

Dai, Gao, Zhang, Wang, Luo, Wang, Wang, Wu, Wang

https://arxiv.org/abs/2602.03548

- OmniRAG-Agent: Agentic Omnimodal Reasoning for Low-Resource Long Audio-Video Question Answering

Yifan Zhu, Xinyu Mu, Tao Feng, Zhonghong Ou, Yuning Gong, Haoran Luo

https://arxiv.org/abs/2602.03707

- GreekMMLU: A Native-Sourced Multitask Benchmark for Evaluating Language Models in Greek

Zhang, Konomi, Xypolopoulos, Divriotis, Skianis, Nikolentzos, Stamou, Shang, Vazirgiannis

https://arxiv.org/abs/2602.05150

- Using LLMs for Knowledge Component-level Correctness Labeling in Open-ended Coding Problems

Zhangqi Duan, Arnav Kankaria, Dhruv Kartik, Andrew Lan

https://arxiv.org/abs/2602.17542 https://mastoxiv.page/@arXiv_csCL_bot/116102514058414603

- MetaState: Persistent Working Memory Enhances Reasoning in Discrete Diffusion Language Models

Kejing Xia, Mingzhe Li, Lixuan Wei, Zhenbang Du, Xiangchi Yuan, Dachuan Shi, Qirui Jin, Wenke Lee

https://arxiv.org/abs/2603.01331 https://mastoxiv.page/@arXiv_csCL_bot/116165314672421581

- A Browser-based Open Source Assistant for Multimodal Content Verification

Milner, Foster, Karmakharm, Razuvayevskaya, Roberts, Porcellini, Teyssou, Bontcheva

https://arxiv.org/abs/2603.02842 https://mastoxiv.page/@arXiv_csCL_bot/116170368271004704

- Nwāchā Munā: A Devanagari Speech Corpus and Proximal Transfer Benchmark for Nepal Bhasha ASR

Sharma, Shrestha, Poudel, Tiwari, Shrestha, Ghimire, Bal

https://arxiv.org/abs/2603.07554 https://mastoxiv.page/@arXiv_csCL_bot/116204797995674104

- Model Merging in the Era of Large Language Models: Methods, Applications, and Future Directions

Mingyang Song, Mao Zheng

https://arxiv.org/abs/2603.09938 https://mastoxiv.page/@arXiv_csCL_bot/116210189810004206

- AgentDrift: Unsafe Recommendation Drift Under Tool Corruption Hidden by Ranking Metrics in LLM Ag...

Zekun Wu, Adriano Koshiyama, Sahan Bulathwela, Maria Perez-Ortiz

https://arxiv.org/abs/2603.12564 https://mastoxiv.page/@arXiv_csCL_bot/116237800898328349

- GhanaNLP Parallel Corpora: Comprehensive Multilingual Resources for Low-Resource Ghanaian Languages

Gyamfi, Azunre, Moore, Budu, Asare, Owusu, Asiamah

https://arxiv.org/abs/2603.13793 https://mastoxiv.page/@arXiv_csCL_bot/116243544688031749

- sebis at ArchEHR-QA 2026: How Much Can You Do Locally? Evaluating Grounded EHR QA on a Single Not...

Ibrahim Ebrar Yurt, Fabian Karl, Tejaswi Choppa, Florian Matthes

https://arxiv.org/abs/2603.13962 https://mastoxiv.page/@arXiv_csCL_bot/116243646346563497

- ExPosST: Explicit Positioning with Adaptive Masking for LLM-Based Simultaneous Machine Translation

Yuzhe Shang, Pengzhi Gao, Yazheng Yang, Jiayao Ma, Wei Liu, Jian Luan, Jinsong Su

https://arxiv.org/abs/2603.14903 https://mastoxiv.page/@arXiv_csCL_bot/116243711232778054

- BanglaSocialBench: A Benchmark for Evaluating Sociopragmatic and Cultural Alignment of LLMs in Ba...

Tanvir Ahmed Sijan, S. M Golam Rifat, Pankaj Chowdhury Partha, Md. Tanjeed Islam, Md. Musfique Anwar

https://arxiv.org/abs/2603.15949 https://mastoxiv.page/@arXiv_csCL_bot/116249122231759766

- EngGPT2: Sovereign, Efficient and Open Intelligence

G. Ciarfaglia, et al.

https://arxiv.org/abs/2603.16430 https://mastoxiv.page/@arXiv_csCL_bot/116249228411487178

- HypeLoRA: Hyper-Network-Generated LoRA Adapters for Calibrated Language Model Fine-Tuning

Bartosz Trojan, Filip Gębala

https://arxiv.org/abs/2603.19278 https://mastoxiv.page/@arXiv_csCL_bot/116277612915482857

- Automatic Analysis of Collaboration Through Human Conversational Data Resources: A Review

Yi Yu, Maria Boritchev, Chloé Clavel

https://arxiv.org/abs/2603.19292 https://mastoxiv.page/@arXiv_csCL_bot/116277620779254916

- Alignment Whack-a-Mole : Finetuning Activates Verbatim Recall of Copyrighted Books in Large Langu...

Xinyue Liu, Niloofar Mireshghallah, Jane C. Ginsburg, Tuhin Chakrabarty

https://arxiv.org/abs/2603.20957 https://mastoxiv.page/@arXiv_csCL_bot/116283538317671552

- KG-Hopper: Empowering Compact Open LLMs with Knowledge Graph Reasoning via Reinforcement Learning

Shuai Wang, Yinan Yu

https://arxiv.org/abs/2603.21440 https://mastoxiv.page/@arXiv_csCL_bot/116283595007808076

toXiv_bot_toot

2026-06-01 01:00:55

Trump health readout leaves key blanks unfilled (Axios)

https://www.axios.com/2026/05/31/trump-checkup-medical-questions-unanswered

http://www.memeorandum.com/260531/p49#a260531p49

2026-03-28 02:02:03

Internal figures show Business Insider's paid subscribers have consistently declined, from about 185K at the end of 2022 to 135K at the end of 2025, a 27% drop (Oliver Darcy/Status)

https://www.status.news/p/business-insider-subscription-decline-data

…

2026-04-28 18:28:15

2026-03-31 10:11:57

GraphWalker: Agentic Knowledge Graph Question Answering via Synthetic Trajectory Curriculum

Shuwen Xu, Yao Xu, Jiaxiang Liu, Chenhao Yuan, Wenshuo Peng, Jun Zhao, Kang Liu

https://arxiv.org/abs/2603.28533 https://arxiv.org/pdf/2603.28533 https://arxiv.org/html/2603.28533

arXiv:2603.28533v1 Announce Type: new

Abstract: Agentic knowledge graph question answering (KGQA) requires an agent to iteratively interact with knowledge graphs (KGs), posing challenges in both training data scarcity and reasoning generalization. Specifically, existing approaches often restrict agent exploration: prompting-based methods lack autonomous navigation training, while current training pipelines usually confine reasoning to predefined trajectories. To this end, this paper proposes GraphWalker, a novel agentic KGQA framework that addresses these challenges through Automated Trajectory Synthesis and Stage-wise Fine-tuning. GraphWalker adopts a two-stage SFT training paradigm: first, the agent is trained on structurally diverse trajectories synthesized from constrained random-walk paths, establishing a broad exploration prior over the KG; second, the agent is further fine-tuned on a small set of expert trajectories to develop reflection and error recovery capabilities. Extensive experiments demonstrate that our stage-wise SFT paradigm unlocks a higher performance ceiling for a lightweight reinforcement learning (RL) stage, enabling GraphWalker to achieve state-of-the-art performance on CWQ and WebQSP. Additional results on GrailQA and our constructed GraphWalkerBench confirm that GraphWalker enhances generalization to out-of-distribution reasoning paths. The code is publicly available at https://github.com/XuShuwenn/GraphWalker

toXiv_bot_toot

2026-03-31 10:09:47

Not All Subjectivity Is the Same! Defining Desiderata for the Evaluation of Subjectivity in NLP

Urja Khurana, Michiel van der Meer, Enrico Liscio, Antske Fokkens, Pradeep K. Murukannaiah

https://arxiv.org/abs/2603.28351 https://arxiv.org/pdf/2603.28351 https://arxiv.org/html/2603.28351

arXiv:2603.28351v1 Announce Type: new

Abstract: Subjective judgments are part of several NLP datasets and recent work is increasingly prioritizing models whose outputs reflect this diversity of perspectives. Such responses allow us to shed light on minority voices, which are frequently marginalized or obscured by dominant perspectives. It remains a question whether our evaluation practices align with these models' objectives. This position paper proposes seven evaluation desiderata for subjectivity-sensitive models, rooted in how subjectivity is represented in NLP data and models. The desiderata are constructed in a top-down approach, keeping in mind the user-centric impact of such models. We scan the experimental setup of 60 papers and show that various aspects of subjectivity are still understudied: the distinction between ambiguous and polyphonic input, whether subjectivity is effectively expressed to the user, and a lack of interplay between different desiderata, amongst other gaps.

toXiv_bot_toot