2026-01-24 12:08:08

2026-03-18 22:10:24

The US Federal Reserve held interest rates steady for the second time this year, a widely expected move amid turmoil in the Middle East and rising energy prices.

Fed officials faced a confluence of issues to consider in their meeting this week: soaring oil and gas prices, fluctuating inflation that remains above the Fed’s target of 2%, and a weakened job market that unexpectedly lost 92,000 jobs last month.

All but one of the 12 voting members of th…

2026-03-21 18:09:10

I know that following a hashtag is part of the Mastodon protocol itself, but since I'm in @… the majority of the time, it feels like it would be cool to:

- Offer a "follow for a week" / "follow for a month" option

- Add a "We'll ask you when time's up" mechanism (maybe the ask part is configurable? maybe the client could also just handle it the next time it runs?)
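The two options above could be sketched client-side roughly as follows. This is a minimal sketch, assuming the client keeps its own record of when each hashtag follow should lapse and checks that record at startup (the "handle it the next time it runs" variant). Everything here (`TimedFollow`, `FollowTracker`, the example tag names) is hypothetical; it doesn't call the Mastodon API, so a real client would still have to send the actual unfollow request.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class TimedFollow:
    """Hypothetical record of a hashtag follow that lapses at expires_at."""
    hashtag: str
    expires_at: datetime
    ask_when_done: bool = True  # the configurable "we'll ask you" part


@dataclass
class FollowTracker:
    """Hypothetical client-side store, checked each time the client starts."""
    follows: dict[str, TimedFollow] = field(default_factory=dict)

    def follow_for(self, hashtag: str, duration: timedelta, now: datetime,
                   ask_when_done: bool = True) -> None:
        tag = hashtag.lower()  # treat hashtags case-insensitively
        self.follows[tag] = TimedFollow(tag, now + duration, ask_when_done)

    def renew(self, hashtag: str, duration: timedelta, now: datetime) -> None:
        # The user answered "keep following": push the expiry out again.
        self.follows[hashtag.lower()].expires_at = now + duration

    def unfollow(self, hashtag: str) -> None:
        self.follows.pop(hashtag.lower(), None)

    def check_expired(self, now: datetime) -> tuple[list[str], list[str]]:
        """Return (tags to ask the user about, tags dropped without asking)."""
        to_ask: list[str] = []
        dropped: list[str] = []
        for tag, follow in list(self.follows.items()):
            if follow.expires_at > now:
                continue  # still inside the follow window
            if follow.ask_when_done:
                to_ask.append(tag)  # kept until the user renews or unfollows
            else:
                dropped.append(tag)
                del self.follows[tag]
        return to_ask, dropped


if __name__ == "__main__":
    start = datetime(2026, 3, 21, tzinfo=timezone.utc)
    tracker = FollowTracker()
    tracker.follow_for("ConferenceX", timedelta(weeks=1), start)
    tracker.follow_for("WorldNews", timedelta(days=30), start, ask_when_done=False)
    print(tracker.check_expired(start + timedelta(days=8)))  # (['conferencex'], [])
```

Tags with the ask flag stay followed until the user answers through `renew` or `unfollow`; tags without it are dropped silently, which covers the "maybe the client could just handle it" case.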

I hit this time and time again with moment-in-time events (conferences, world news, etc.): during the thick of it I'm invested and interested, but afterwards there's no more signal in the hashtag and it's just detritus putting load on a server somewhere.

Ideally this would live in the protocol itself, but, similar to quote replies, it feels like @… could offer a better user experience ahead of the protocol adopting something like that.

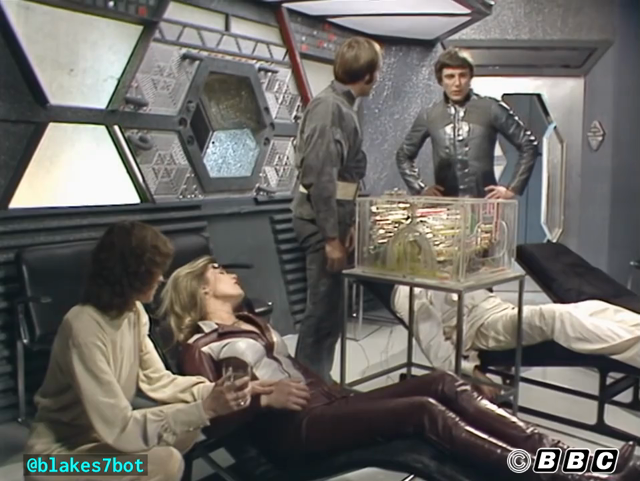

2026-02-22 16:28:57

B10 - Voice from the Past

ORAC: Requires minimum of two hours eradication therapy. Maximum five minute treatment periods interspersed with one hour rest periods. Hence, minimum rehabilitation period of twenty-six hours.

JENNA: [Sighs] Oh, no.

https://blake.torpidity.net/m/210/159 B7B4

2025-12-30 18:36:33

NFL MVP odds: Drake Maye favored after Matthew Stafford's MNF bust https://www.nytimes.com/athletic/6929726/2025/12/30/nfl-mvp-odds-drake-maye-matthew-stafford-mnf/

2026-01-09 21:58:17

Surveillance Watch – A map showing the connections between surveillance companies

Surveillance technologies and #spyware are used to target and suppress journalists, dissidents, and human rights defenders around the world. Surveillance Watch is an interactive database that documents the hidden connections within the opaque sector of the…

2026-01-19 04:27:15

I decided it would be "fun" to install Koffan, the cool little shopping list app, on my k3s cluster.

I created a manifest for Flux and tested it on a placeholder hostname. Then I decided I should move it to an internal subdomain and switch to a wildcard certificate to minimize Let's Encrypt requests for internal things. This meant I needed to install reflector to copy the wildcard cert into all the namespaces.

Once I deployed reflector, I had errors with slots…
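The wildcard-cert-plus-reflector setup described above could look roughly like this. It is a minimal sketch, assuming cert-manager issues the certificate (the post doesn't say) through a ClusterIssuer that uses a DNS-01 solver, since Let's Encrypt only issues wildcard certificates via DNS-01. The domain `*.internal.example.com`, the issuer `letsencrypt-dns`, the secret name, and the `koffan` namespace are hypothetical placeholders. The `reflector.v1.k8s.emberstack.com/*` keys are the annotations documented by emberstack's kubernetes-reflector; cert-manager's `secretTemplate` copies them onto the generated Secret, which is the object reflector watches.

```python
import json

# Hypothetical placeholders: swap in your own domain, issuer, and namespaces.
WILDCARD_DOMAIN = "*.internal.example.com"
ISSUER_NAME = "letsencrypt-dns"  # assumed ClusterIssuer with a DNS-01 solver
SECRET_NAME = "internal-wildcard-tls"
CERT_NAMESPACE = "cert-manager"

REFLECTOR = "reflector.v1.k8s.emberstack.com"


def wildcard_certificate(target_namespaces: list[str]) -> dict:
    """Build a cert-manager Certificate whose Secret reflector will mirror."""
    namespaces = ",".join(target_namespaces)
    return {
        "apiVersion": "cert-manager.io/v1",
        "kind": "Certificate",
        "metadata": {"name": SECRET_NAME, "namespace": CERT_NAMESPACE},
        "spec": {
            "secretName": SECRET_NAME,
            "dnsNames": [WILDCARD_DOMAIN],
            "issuerRef": {"name": ISSUER_NAME, "kind": "ClusterIssuer"},
            # secretTemplate puts these annotations on the generated Secret.
            "secretTemplate": {
                "annotations": {
                    f"{REFLECTOR}/reflection-allowed": "true",
                    f"{REFLECTOR}/reflection-allowed-namespaces": namespaces,
                    f"{REFLECTOR}/reflection-auto-enabled": "true",
                    f"{REFLECTOR}/reflection-auto-namespaces": namespaces,
                }
            },
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON manifests, e.g. pipe this into `kubectl apply -f -`.
    print(json.dumps(wildcard_certificate(["koffan"]), indent=2))
```

With auto-reflection enabled, reflector creates a copy of `internal-wildcard-tls` in each listed namespace, so an Ingress there can reference it by name instead of requesting its own certificate.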

2025-12-29 22:25:44

A chilly 36°F (2.2°C), but with sustained 19 mph (30.6 kph) winds gusting to 38 mph (61 kph), making it feel like 26°F (-3.3°C). Once or twice I came around a corner and was nearly stopped in my tracks. I do love this chilly weather, and saw several other runners also on #TeamShorts.

Ran/walked 4/1-minute intervals again, which feels mostly right at the minute, although I was fading to somethin…

2025-12-31 13:43:48

Health Stuff

Recovered somewhat from whatever that weird fit was at the end of last year.

The NHS still has me on some sort of list, I think; an appointment might turn up next year. At my last contact with them, they were suggesting maybe November.

But the private treatment gave me the MRI, which showed nothing weird, and post-fit symptoms have been reducing.

Experimented with eating meat to see if that helped.

Tried eating meat for a month to see if it reduced the post-fit symptoms. Maybe it did? Tried going vegetarian again and maybe it got worse?

Hard to tell with intermittent symptoms and a terrible memory.

Decided it's more likely to do good than harm (to me; to the animals it's entirely harm, obviously), and to eat meat until the end of the year.

While I'm better, I don't really feel fully recovered enough to want to change things so more meat next year I think.

One of those symptoms is that booze tolerance dropped to like zero. Very rarely had more than four pints in a day this year, and very rarely more than one night a week.

Much higher number than the target there, really.

Tripped over a guy-rope and fell onto my wrist.

Did two days of the festival and the drive home thinking it almost certainly wasn't broken, and only found out it was when I got home and the hospital put it in a cast.

2026-03-19 19:25:29

B10 - Voice from the Past

ORAC: Requires minimum of two hours eradication therapy. Maximum five minute treatment periods interspersed with one hour rest periods. Hence, minimum rehabilitation period of twenty-six hours.

JENNA: [Sighs] Oh, no.

https://blake.torpidity.net/m/210/159 B7B5