2026-03-15 23:28:53

In the two weeks since the U.S. and Israel launched strikes on Iran, Donald Trump increasingly has been knocked on his political heels. He’s grown more agitated with news coverage and has failed to find a way to explain why he started the war (or how he will end it) that resonates with a public concerned by American deaths in the conflict, surging oil prices and dropping financial markets. Even some of his supporters are questioning his pla…

2026-04-14 14:22:42

So to follow up on this, I've caught it in action. Models, when quantized a bit, do only a bit worse with short contexts. Even going from f32 (as trained) to bf16 (as usually run) to q8 tends to do okay for "normal" context windows. At q4 you start feeling like "this model is a little stupid and gets stuck sometimes" (it is! It's just that it's still careening about in the space of "plausible" most of the time. Not good guesswork, but still in the zone). With long contexts, the probability of parameters collapsing to zero is higher, so the more context you use, the more likely you are to see brokenness.

And then at Q2 (2 bits per parameter) or Q1, the model falls apart completely. Parameters collapse to zero easily. You start seeing "all work and no play makes Jack a dull boy" sorts of behavior, with intense and unscrutinized repetition, followed by a hard stop when it just stops working.
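To make the "collapse to zero" point concrete, here is a toy sketch. It assumes plain symmetric uniform quantization with one scale for the whole tensor, and random Gaussian numbers standing in for weights. Real quantizers (per-block scales, outlier handling and so on) are smarter than this, so treat the trend as illustrative, not as a measurement of any actual model. The helper names are made up for the example.

```python
import random

def quantize_roundtrip(weights, bits):
    """Quantize to `bits` bits with one shared scale, then dequantize.

    Assumption: symmetric uniform quantization, bits >= 2.
    """
    levels = 2 ** (bits - 1) - 1          # 127 for 8 bits, 7 for 4, 1 for 2
    scale = max(abs(w) for w in weights) / levels
    return [round(w / scale) * scale for w in weights]

def zero_fraction(values):
    """Share of values that ended up exactly zero."""
    return sum(1 for v in values if v == 0) / len(values)

def mean_abs_error(original, restored):
    """Average absolute difference introduced by the round trip."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)

random.seed(0)
# Stand-in "weights": 10,000 samples from a unit Gaussian (an assumption).
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]

for bits in (8, 4, 2):
    restored = quantize_roundtrip(weights, bits)
    print(
        f"q{bits}: mean abs error {mean_abs_error(weights, restored):.4f}, "
        f"zeros {zero_fraction(restored):.1%}"
    )
```

In this toy setup the share of values that round to exactly zero grows as the bit width shrinks, and at 2 bits most of them are gone.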

And quantization is a knob that a model vendor can turn relatively easily. (They have to regenerate the model from the base with more quantization, but it's a data transformation on the order of running a terabyte through a straightforward and fast process, not like training.)

If you have 1000 customers and enough equipment to handle the requests of 700, going from bf16 to q8 is a no-brainer. Suddenly you can handle the load and have a little spare capacity. Customers get worse results and probably pay the same per token, or they're on a subscription that hides the cost anyway, so you are even freer to make trade-offs. (There's a reason that subscription products are kinda poorly described.)
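The arithmetic behind that, as a back-of-the-envelope sketch. The big assumption is that serving capacity is memory-bound and scales inversely with bits per parameter. The `efficiency` fudge factor is hypothetical, there to account for the fact that real gains are smaller than the ideal ratio.

```python
def capacity_after_quantization(current_capacity, current_bits, new_bits, efficiency=1.0):
    """Estimate how many customers the same hardware can serve after quantizing.

    Assumption: capacity scales with (current_bits / new_bits), discounted
    by a hypothetical `efficiency` factor between 0 and 1.
    """
    return current_capacity * (current_bits / new_bits) * efficiency

customers = 1000
capacity_bf16 = 700  # from the post: enough equipment for 700 of 1000 customers

ideal = capacity_after_quantization(capacity_bf16, current_bits=16, new_bits=8)
discounted = capacity_after_quantization(capacity_bf16, 16, 8, efficiency=0.8)

print(f"ideal q8 capacity: {ideal:.0f}, covers load: {ideal >= customers}")
print(f"with 20% overhead: {discounted:.0f}, covers load: {discounted >= customers}")
```

Even with a chunk of the ideal gain lost to overhead, the shortfall turns into headroom under these assumptions.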

It's also possible for them to vary this across a day. Use the model during a quieter period? Maybe you get an instance running at bf16. Use it during a high-use period? You get a Q4 model.

Or intelligent routing is possible. No idea if anyone is doing this, but if they monitor what you send a bit, and you generally shoot for an expensive model for simple requests? They could totally substitute a highly quantized version of the model to answer the question.

There are •so many tricks• that can be pulled here. Some of them very reasonable to make, some of them treading into outright misleading or fraudulent, and it's weirdly hard to draw the line between them.

2026-02-16 09:08:40

2026-02-13 20:51:18

Git gurus: suppose I have a complex repository structure containing multiple levels of submodules.

We get frequent support tickets on GitHub from users who failed to update one or more submodules when pulling the latest changes from the parent repo, and who then hit build issues or simply don't pick up the bug fix they're trying to test.

Is there a good way to fix/detect this in the build system? Like recursively follow .gitmodules and complain if the commit the parent wants isn&…
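One possible approach, sketched under assumptions: `git submodule status --recursive` prefixes each line with `-` when a submodule is not initialized, `+` when the checked-out commit differs from the one the containing repo records, and `U` on merge conflicts. A build step could run it and fail loudly. The function names here are made up for illustration, the parser assumes submodule paths without spaces, and uninitialized submodules are reported but not descended into.

```python
import subprocess

def parse_submodule_status(output):
    """Return (flag, path) pairs for submodules that are not at the recorded commit.

    Expects the text printed by `git submodule status --recursive`.
    Flags: '-' uninitialized, '+' wrong commit checked out, 'U' merge conflict.
    """
    problems = []
    for line in output.splitlines():
        if not line.strip():
            continue
        flag = line[0]               # first column is the status flag (or a space)
        fields = line[1:].split()    # [sha, path, optional "(describe)"]
        if flag in ("-", "+", "U") and len(fields) >= 2:
            problems.append((flag, fields[1]))
    return problems

def find_stale_submodules(repo_dir="."):
    """Run git in `repo_dir` and report out-of-date submodules at any depth."""
    result = subprocess.run(
        ["git", "submodule", "status", "--recursive"],
        cwd=repo_dir,
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_submodule_status(result.stdout)
```

A build script could call `find_stale_submodules()` first and abort with a hint to run `git submodule update --init --recursive` if the returned list is non-empty.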

2026-03-13 22:35:16

One of my VR Lighthouses died last month. These things are gyroscopically spinning 24 hours a day for, what, a decade now? Nearly.

No wonder. Mostly the industry seems to be settling on using head-mounted cameras rather than sweeping infra-red beams and receptors on the head anyway.

It is true that lighthouses give accurate positioning, but it means I can't easily take the headset next door, say. Or to a party.

So inside-out, as they call it, is fine for the headset now and mostly okay for the hand-controllers.

But it offers no solution at all for the foot-trackers and hip-tracker that I need for puppetting the characters in the #vr #slimeVR #trackers

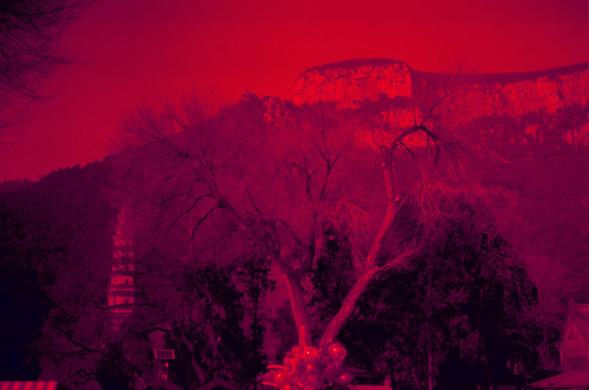

2026-03-07 11:00:02

Life Treats 🥇

生活奖励 🥇

📷 Nikon FE

🎞️ Ilford HP5 Plus 400, expired 1993

If you like my work, buy me a coffee from PayPal #filmphotography

2026-02-10 13:36:49

Series C, Episode 01 - Aftermath

AVON: I don't know; I never really had an offer I felt was worthy of me.

SERVALAN: Then why don't you see if you can find us a drink. We have things to talk about.

https://blake.torpidity.net/m/301/339 B7B3

2026-04-08 18:24:47

My daughter had her second complete game pitched yesterday, and a win. Our practice work is showing up on the field for sure now. Her weakness now is still too many balls thrown. The pitches that are in the strike zone come in fast and are hard to hit, or if hit they go nowhere. But yeah, getting there. We just keep on working on keeping her form the same. Super proud of her progress!

2026-01-27 13:12:55

Also: we're seeing what happens when white people are actually motivated en masse (and the George Floyd response was actually another decent example of this).

General strike -> capitalist class goes "oh shit we need to deescalate" -> temporary reprieve.

White people actually putting their bodies on the line (or at least near enough to it that ICE killed them) got results. This is direct evidence of just how much oppression depends on the social fragmentation it invests immense energy into creating in order to not get its ass kicked both ideologically and literally.

Also for those white people like me who are scared to participate: I don't have the numbers, but there were something like 50,000 people who stood up (even if we just want to count observers and joiners-of-whistle-crowds I'd guess at least 5,000-10,000). Two in that category died (more like 30 have died in the direct-targets-of-ICE category). So don't look at Pretti and think "protesting is so risky." Consider that both the odds of being the one or two killed are low, and that if you don't stand up quickly and strongly enough against this shit, the body count will grow much higher.

This isn't over, and continued escalation and resistance are super critical now. Rather than hoping the Twin Cities story is a story of heroes elsewhere who solved the problem, make it a story of an inspiring example that gets replicated in LA, Chicago, and all around the nation where ICE is trying to metastasize into an unaccountable secret police.

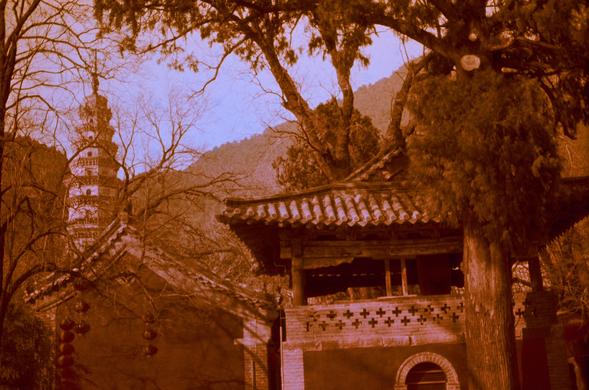

2026-04-05 00:49:02

Pagoda 7️⃣

浮屠 7️⃣

📷 Pentax MX

🎞️ Harman Red 125 (FF)

If you like my work, buy me a coffee or a roll of film from PayPal #filmphotography