2026-05-09 14:07:12

One thing that really annoys me, as a cyclist and as a walker, is when a cyclist approaches from behind and tries to pass...

Next to a street with traffic,

with wind,

with a voice about as loud as a shy cat's.

And THEN getting angry if I don't give the space to pass!

Guys, you - are - NOT - audible!! And I have really good hearing!!

Either

- shout as if your life depends on it, or

- buy a simple bell (magic!)

- live with the fact t…

2026-06-08 23:07:44

RE: https://flipboard.com/@cbcnews/canada-1akomc2pz/-/a-y07zm2wGSTuMgOEJTVI_Jg:a:107108217-/0

I wrote to the office of the Mayor last week about that shameful behaviour.

The answer I got was super weird …

2026-03-10 07:06:14

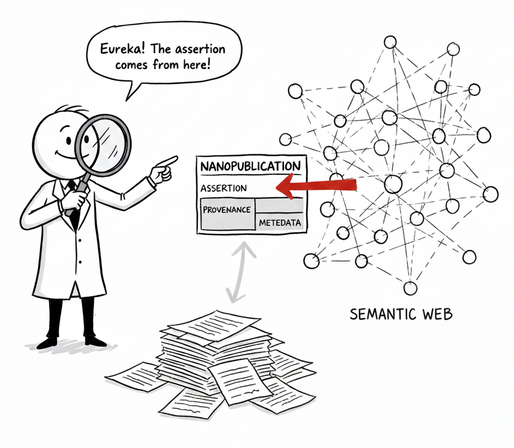

"What is a nanopublication really?" – by Rohitha Ravinder

https://medium.com/@rohitha0112/what-is-a-nanopublication-really-413cac6da0dd

2026-05-08 13:53:55

I’m not sure what sets us up to have better LMSes, but I don’t think institutions building them in-house is a viable answer.

One long-successful direction I like, and one that has been gaining ground of late, is course-specific websites. I'm cheering the rise of static site generators, and hoping they continue to creep outside the confines of the techiest among us. A course site is not an LMS, but moving course *content* mostly out of the LMS simplifies the problem considerably!

/end

2026-05-08 01:24:51

Ask why, and know the answers are unsatisfying and contingent. It made sense at the time. Because we didn't understand yet. Because changing it would certainly break something else and we didn't know what yet and didn't have time to understand it. Yet.

Computers can be understood. Everything happening inside them can be peeked at, prodded, and taken apart to see what is going on. Inside every complex system are simpler parts. The complexity comes from how they combine, not from mystery.

I cannot emphasize that enough. Just because you don't know, or nobody you know knows, why something is the way it is does not mean it is unknowable.

2026-04-08 16:33:39

Working on an #opensource project can be strange sometimes.

For me, the strangest part is how people behave when they want something changed.

Some peeps simply ask. (Yes, do this one)

Some get extremely angry that it isn't already like they want and demand it be changed.

Some people try to pay for the change.

Some try to guilt you to make the change by saying other…

2026-06-05 21:01:00

Punk is the way.

"...stop wasting time, energy, and emotions inside the gigantic, vacant '80s malls all social networks have become."

https://brilliantcrank.com/punk-is-the-way/?ref=brilliantcrank-newsletter

2026-05-06 05:13:41

Here's an answer for a life-changing technology that truly stands out:

"The Bicycle

Selected by Reshma Saujani

In the 1890s, the bicycle, as we know it today, finally let women go where they wanted, on their own, without asking permission. It even played a central role in the fight for women’s suffrage—a simple machine with outsized impact. Today, it reminds us what technology should do: expand freedom and opportunity. Millions of American women are still fighting f…

2026-03-28 16:03:27

No, they want your DNA to track you.

Folks, have you seen GATTACA?

▶️ U.S. lawmakers demand answers after Canadian man says border officers made him give DNA sample | CBC News

https://www.cbc.ca/news/canada/windsor/us-bord…

2026-06-10 04:05:09

Edit: question answered successfully! Ty!

OK folks, I need some #docker advice from the gurus out there. A boost would be appreciated to get this in front of knowledgeable eyes.

I have a container running PostgreSQL for Mastodon. Now that I have things going, I would like to tweak the PostgreSQL conf file.

What is the safest way to do this on a running container?

Can I stop the instance with `docker stop`, start it again, and have it simply pick up the new postgresql.conf specified in the original `docker run` command?

I'm getting more comfortable with Docker... but not *that* comfortable, so I'm still worried that if I restart it, it'll break somehow lol.

I want to make sure I do this right so I don't wreck what appears to be a running and reasonably happy container :)

I'm running Docker in a Colima virtual machine on macOS.

#mastoAdmin #docker #colima #postgresql