2026-02-20 12:46:00

This is "interesting": in a civil trial in which Elon M. is the defendant, the court is having difficulty finding enough unbiased jurors to empanel a jury:

Well, that's one way to evade liability: become so hated that there aren't enough people who don't hate your guts to form a jury.

"Musk’s Twitter Trial Gets Jurors Who Can Set Aside Feelings"

2026-04-15 11:54:35

2026-03-26 11:45:57

The European Commission launches a DSA investigation into Snapchat for failing to protect children, including assessing its age verification measures (Eliza Gkritsi/Politico)

https://www.politico.eu/article/eu-probes-snapchat-for-fa…

2026-03-30 18:41:21

2026-03-09 21:40:45

Filing: Anthropic says it had $5B in all-time revenue since 2023 and could lose billions after clients paused deal talks due to supply-chain risk designation (Paresh Dave/Wired)

https://www.wired.com/story/anthropic-claims-business-is-…

2026-03-31 14:54:42

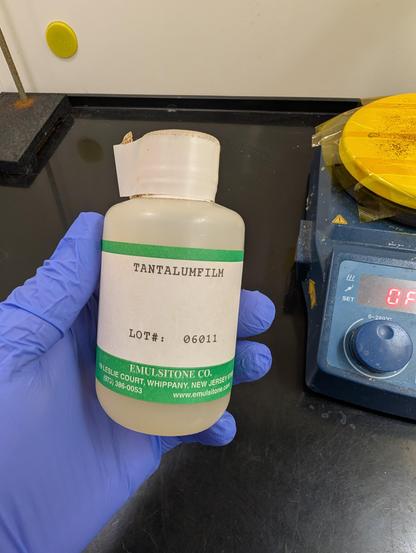

Still as cis as ever, but I thought I'd show my support by posting an interesting transition metal compound from my lab inventory.

Here's a solution of tantalum chloride in ethanol/methanol, intended for sol-gel deposition of tantalum pentoxide thin films. You spin-coat it on a substrate, then heat it in air; the chlorine is exchanged for oxygen from atmospheric water vapor, so HCl gas evaporates and Ta2O5 is left on the surface.
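
For anyone who wants the bookkeeping, the net reaction can be sketched as below. This is only the overall stoichiometry, assuming the chloride is the pentachloride (TaCl5) and that hydrolysis goes to completion; in alcohol solution the tantalum is likely partly present as chloro-alkoxide species, so the real pathway has intermediates that aren't shown here.

```latex
2\,\mathrm{TaCl_5} + 5\,\mathrm{H_2O} \;\longrightarrow\; \mathrm{Ta_2O_5} + 10\,\mathrm{HCl}\uparrow
```

Atom count check: 2 Ta, 10 Cl, 10 H, and 5 O on each side.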

2026-03-23 02:32:42

that bittersweet feeling of opening a used book & finding a sweet bookmark from a long-departed bookstore in a faraway place (closed in 1999, according to reddit) #books