Content warning: AI Bubble gossip

Quoting Carole Cadwalladr's (@…) post of Monday 17th Nov 2025, "Peter Thiel gets out of Dodge",

itself quoting...

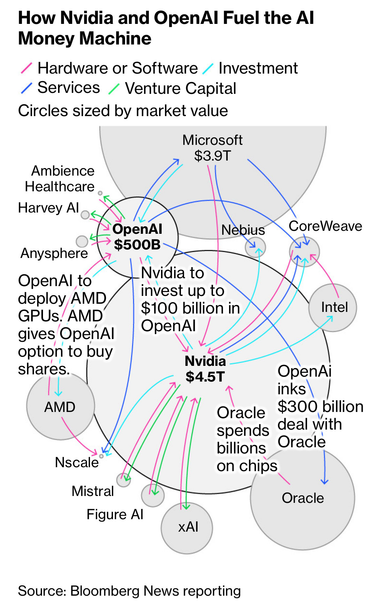

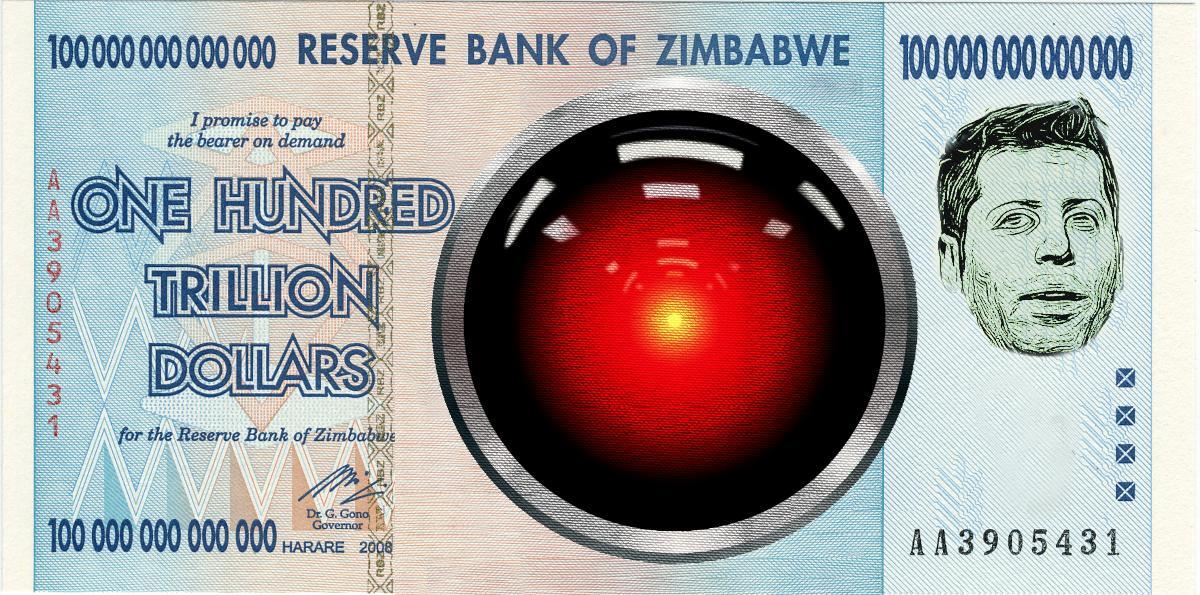

“The CFO of OpenAI, Sarah Friar, gave the game away a week ago when she said that she was thinking that there was a role for the government to play in backstopping the future investments of companies like OpenAI. That is a terrible idea. […]

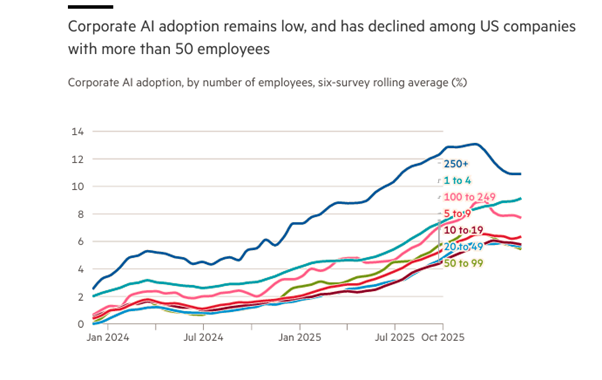

You know, everybody on Wall Street is excited about this #bubble. 34% of the market value is in the big companies. The private ones have notional values that would make them, you know, Fortune 200 companies. […]”

Investors have to take the hit, not taxpayers/public services.

I have seen this film before.

I still don't like the ending.

➡️ #Edinburgh

Edited to add tag