Interesting LLM nuance: why does a phrase like "you're a pen-tester" cause chatbots to emit substantially different predictions, and basically "follow" that instruction? Since LLMs predict text based on patterns in their training data, this implies that the training data has plenty of examples where real humans told each other that they were some role and the other person just immediately jumped into that role without question or intervening dialogue. But that's not something people do in normal conversation. Even when playing with kids, if you drop that out of the blue you're probably going to get "no I want to be a robot" or "but you were the elephant last time!" rather than immediate assumption of the assigned role.

It's possible that training LLMs to predict immediate role-assumption is something the big model makers spent a lot of manual effort on. But what I think is more likely is: it's the legacy of role-play forums! All those reams of posts by teenagers (yes, often horny) pretending to be Captain Kirk or their own incredibly cringe "cool" character (but honestly, why call it cringe, let kids be kids and have fun)...

So next time you "tell" a chatbot "you're a..." to get it to do what you want, I'm pretty sure you have an RP forum teen from the past to thank :)

#AI #LLMs

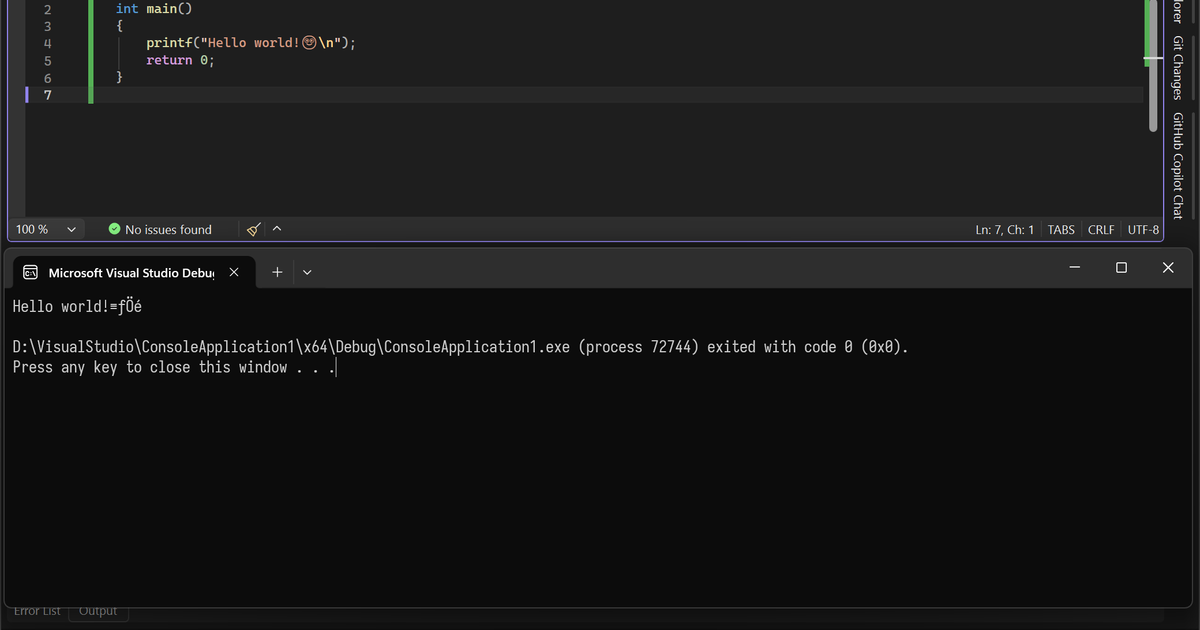
