2026-06-01 14:32:49

2026-06-01 14:28:37

2026-03-30 13:40:13

Fun playing ARC-AGI-3, puzzles of which the most advanced AI models can solve only 1% 😀

Illustrates how AI models look extremely smart but are at the same time quite dumb.

#AI

2026-03-31 21:42:15

Yesterday, I got an incredible opportunity to watch Cindy Cohn bring @…'s story to one of its biggest audiences yet, as she did an extraordinary job on the Daily Show, pulling off the unlikely task of making the topic of digital privacy and civil liberties seem fun, engaging and witty. I took the chance to share some reflections — and a couple of behind-the-scenes ph…

2026-05-01 17:15:00

Apropos of this second-latest comment on this fascinating thread, if your argument boils down to stuff that was removed from a draft spec three years ago, then your argument might be moot:

https://github.com/w3c/wcag3/issues/642#issuecomment-4360244086

Because…

2026-05-01 18:20:39

'I have heard it said, more than once, in research contexts, that an LLM can do some task “better than a graduate student.” That language makes me uncomfortable, because it is taking an extremely instrumental view of graduate students. Are graduate students in our groups and our laboratories and our universities to do work? Or are they here to learn? I very much hope the latter, or, if they are here to work, it is because that work is also critical to their learning' - David Hogg

https://arxiv.org/abs/2602.10181

2026-04-01 12:16:31

So many videos on #YouTube are fucking unwatchable these days because words advertisers don't like are either replaced with the corporate newspeak Stephanie is talking about or cut out in the same way they would be beeped out in television, just instead of the beep the word is cut entirely.

I know getting a YouTube replacement rolling is a Herculean task for a plethora of reasons but h…

2026-05-01 08:08:23

On Agentic Behavioral Modeling

Dirk Ostwald, Rasmus Bruckner, Franziska Usée, Belinda Fleischmann, Joram Soch, Sean Mulready

https://arxiv.org/abs/2604.27894 https://arxiv.org/pdf/2604.27894 https://arxiv.org/html/2604.27894

arXiv:2604.27894v1 Announce Type: new

Abstract: Integrating theoretical neuroscience, decision theory, and probabilistic inference offers a promising route to understanding human cognition, yet concrete methodological bridges between agentic AI models and behavioral data analysis remain formally underdeveloped. We advance this synthesis under the framework of agentic behavioral modeling (ABM), which treats artificial agents as latent, generative hypotheses about cognitive mechanisms and evaluates them by their statistical adequacy in explaining human behavior. After outlining its conceptual foundations, we apply the framework to two minimal laboratory paradigms: a binary perceptual contrast-discrimination task and a symmetric two-armed bandit learning task. We formalize each task-agent-data system as a joint probability model, derive explicit conditional log-likelihoods for behavioral inference, validate different model variants using model and parameter recovery simulations, and evaluate them in light of empirical data. Using these minimal examples, we provide an agent-centric interpretation of the psychometric function, derive optimal policies for both tasks, and show the equivalence between Rescorla-Wagner learning and Bayesian inference in symmetric bandits. More broadly, this work may serve as a conceptual and practical foundation for applying ABM to cognitive behavioral science.

toXiv_bot_toot

2026-05-31 20:59:01

Task avoidance level 3 engaged: sending "catch up" emails to friends I haven't talked to in over 6 months.

2026-03-22 03:55:55

Hands-on with Gemini task automation on mobile: it's super impressive despite being very slow and failing at some tasks; it can order food, book Ubers, and more (Allison Johnson/The Verge)

https://www.theverge.com/tech/898282/gemini-task-automation-uber…

2026-03-31 09:30:12

Democratizing Federated Learning with Blockchain and Multi-Task Peer Prediction

Leon Witt, Kentaroh Toyoda, Wojciech Samek, Dan Li

https://arxiv.org/abs/2603.28434 https://

2026-03-31 10:11:22

Structural-Ambiguity-Aware Translation from Natural Language to Signal Temporal Logic

Kosei Fushimi, Kazunobu Serizawa, Junya Ikemoto, Kazumune Hashimoto

https://arxiv.org/abs/2603.28426 https://arxiv.org/pdf/2603.28426 https://arxiv.org/html/2603.28426

arXiv:2603.28426v1 Announce Type: new

Abstract: Signal Temporal Logic (STL) is widely used to specify timed and safety-critical tasks for cyber-physical systems, but writing STL formulas directly is difficult for non-expert users. Natural language (NL) provides a convenient interface, yet its inherent structural ambiguity makes one-to-one translation into STL unreliable. In this paper, we propose an ambiguity-preserving method for translating NL task descriptions into STL candidate formulas. The key idea is to retain multiple plausible syntactic analyses instead of forcing a single interpretation at the parsing stage. To this end, we develop a three-stage pipeline based on Combinatory Categorial Grammar (CCG): ambiguity-preserving n-best parsing, STL-oriented template-based semantic composition, and canonicalization with score aggregation. The proposed method outputs a deduplicated set of STL candidates with plausibility scores, thereby explicitly representing multiple possible formal interpretations of an ambiguous instruction. In contrast to existing one-best NL-to-logic translation methods, the proposed approach is designed to preserve attachment and scope ambiguity. Case studies on representative task descriptions demonstrate that the method generates multiple STL candidates for genuinely ambiguous inputs while collapsing unambiguous or canonically equivalent derivations to a single STL formula.

2026-05-26 03:20:31

Vance to host state AGs at White House for fraud task force meeting (Jacob Wendler/Politico)

https://www.politico.com/news/2026/05/25/vance-state-attorneys-general-fraud-task-force-00935539

http://www.memeorandum.com/260525/p62#a260525p62

2026-05-27 13:56:05

#superproductivity app is great. There aren't many apps I can run on my locked-down computer at work. But this one can sync via WebDAV, so I installed a minimal WebDAV server just to synchronize the JSON and MD files the app generates. It works flawlessly! I have finally found a way to take my todos between work and home.

Age makes remembering things more and more tr…

2026-04-16 18:57:26

Trump’s Memphis Crime Task Force Arrested Over 800 Immigrants, Records Show.

💥Only 2% of the Arrests Were for Violent Crimes.

Trump ordered a law enforcement surge in Memphis to end violent crime. -- But crime in the city has fallen steadily since 2023, hitting a 25-year low before the surge began.

The vast majority of the more than 5,200 arrests made by the Memphis Safe Task Force in its first four months have been for nonviolent crimes.

Of the task force’s 800 imm…

2026-05-29 19:46:13

"Starting with Values: Introducing Our AI Engagement Framework" @ ZSR Library, Wake Forest University

https://zsr.wfu.edu/2026/starting-with-values-introducing-our-ai-engagement-framework/

[via

2026-05-29 21:05:52

Just the News founder John Solomon says he is being vetted for a short-term White House role; source: he would lead a task force to declassify documents (New York Times)

https://www.nytimes.com/2026/05/29/us/politics/john-solomon-trump-white-house.html

2026-05-28 11:14:37

A productive morning at the psychology coalface! Completed a deck for a workshop, listed a new masterclass online, and published my monthly newsletter.

If you're interested, you can read (and subscribe!) here:

https://www.worklifepsych.news/you-are-not-your-task-list/…

2026-05-26 13:06:00

does anybody happen to know if the results of the #europeana Linked Data Task Force have been published somewhere?

This page here https://pro.europeana.eu/index.php/projec…

2026-05-26 11:30:24

Vision-restricted dual-task training as a novel intervention to improve cognitive performance and quality of life in type 2 diabetes: A randomized controlled trial https://journals.lww.com/jehp/fulltext/2026/05070/vision_restricted_dual_ta…

2026-04-28 16:19:21

My current task for our #VFXPipeline is to accommodate Windows users in a Linux pipeline. Easiest option: give every Photoshop artist a Linux workstation for Nuke. Seems to be a common thing. But out of curiosity (and to be prudent with hardware) I'm trying to get everything working on Windows. A constant source of sadness, I have to say, worse than UTF-8 strings in Python 2.

2026-05-30 06:01:30

2026-05-22 03:31:38

Kennedy fires heads of preventive health screening task force | LiveNOW from FOX

https://www.livenowfox.com/news/rfk-jr-fires-heads-preventive-health-screening-task-force

2026-04-23 19:48:34

LLMs Corrupt Your Documents When You Delegate

"Delegation requires trust - the expectation that the LLM will faithfully execute the task without introducing errors into documents. We introduce DELEGATE-52 to study the readiness of AI systems in delegated workflows. DELEGATE-52 simulates long delegated workflows that require in-depth document editing across 52 professional domains, such as coding, crystallography, and music notation. Our large-scale experiment with 19 LLMs reveals …

2026-05-29 15:53:05

Still enjoying Linux desktop but some edges are rough. Today's goal: play the new Boards of Canada album from the download I just bought.

The good thing about Linux is there's almost always some workaround; there must be 100 ways to play music in this environment. It's just comical that this simple task is so complicated.

2026-05-13 20:21:05

“Four Memphis residents filed suit in federal court to stop the Memphis Safe Task Force from retaliating against them for exercising their First Amendment right to film the Task Force’s immigration and law enforcement activity.”

https://www.

2026-04-28 19:37:24

An outstanding article, and it's not about AI. It's about added value and doing business:

"A marketing manager with no engineering background opens Cursor on Monday morning. By Wednesday afternoon, she has a working customer-facing app. It looks polished. It performs the core task. She demos it to her VP, who forwards it to their CMO, who then shows it in the executive staff meeting as evidence that the team is “moving at AI speed.”

By Friday, it is in front of custo…

2026-04-27 07:15:23

“In this work, we conduct a large-scale simulation of how users might delegate work to LLMs across 52 professional domains. We find that current LLMs are unreliable delegates: even frontier models corrupt an average of 25% of document content over long workflows, with sparse but severe errors that silently compound over time.”

Good to see the issue addressed explicitly, even though the results aren’t surprising—why would anyone expect LLMs to be reliable!?

2026-05-21 09:03:23

"The Pentagon’s cyber-warfighting arm is launching a task force to speed up the adoption of cutting-edge artificial intelligence tools with powerful hacking capabilities, according to three people with knowledge of the effort."

https://www.politico.com/news/2026/05/20/n

2026-04-28 18:18:47

Writing an ERWC-Style Module: Choosing Texts

I have written four ERWC modules and substantially revised several more. Most of my own modules turned out to be about full-length works, including Ursula Le Guin’s The Left Hand of Darkness, George Orwell’s 1984, and Aldous Huxley’s Brave New World. Writing a module around a novel is an interesting, complex, and time-consuming task. I will take that up later.

2026-03-30 00:20:40

Midjourney CEO David Holz says the company's revenue "significantly surpassed" $200M in 2023, and has "gone up" since then, despite its declining web traffic (Jemima McEvoy/The Information)

https://www.theinformation.com/articles/mi

2026-03-21 17:57:10

New Bingo Boys album just came out today, and of COURSE it's a ripper.

#punk

2026-05-29 18:54:47

NASA awarded Jeff Bezos' Blue Origin an initial $188 million contract to get its robotic Blue Moon Mark 1 lander ready to deliver lunar terrain vehicles, or LTVs, with an option period worth an additional $280.4 million for two task orders.

Carlos Garcia-Galan, program manager for NASA’s Moon Base program, said the LTVs will be “a mix between the Apollo lunar roving vehicle and the Mars-style rover.”

Each rover will weigh a little less than one metric ton, …

2026-05-01 09:01:47

Replaced article(s) found for q-bio.NC. https://arxiv.org/list/q-bio.NC/new

[1/1]:

- Effect of an auditory static distractor on the perception of an auditory moving target

Noa Kemp, Cynthia Tarlao, Catherine Guastavino, B. Suresh Krishna

https://arxiv.org/abs/2510.25119 https://mastoxiv.page/@arXiv_qbioNC_bot/115462256692285649

- Cortex-Inspired Continual Learning: Unsupervised Instantiation and Recovery of Functional Task Ne...

Kevin McKee, Thomas Hazy, Yicong Zheng, Zacharie Bugaud, Thomas Miconi

https://arxiv.org/abs/2604.24637 https://mastoxiv.page/@arXiv_csLG_bot/116481549483167923

2026-05-27 11:25:10

Sonnet 072 - LXXII

O! lest the world should task you to recite

What merit lived in me, that you should love

After my death,--dear love, forget me quite,

For you in me can nothing worthy prove.

Unless you would devise some virtuous lie,

To do more for me than mine own desert,

And hang more praise upon deceased I

Than niggard truth would willingly impart:

O! lest your true love may seem false in this

That you for love speak well of me…

2026-03-31 11:13:03

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[4/5]:

- Retrieving Climate Change Disinformation by Narrative

Upravitelev, Solopova, Jakob, Sahitaj, Möller, Schmitt

https://arxiv.org/abs/2603.22015 https://mastoxiv.page/@arXiv_csCL_bot/116283633674519408

- PaperVoyager : Building Interactive Web with Visual Language Models

Dasen Dai, Biao Wu, Meng Fang, Wenhao Wang

https://arxiv.org/abs/2603.22999 https://mastoxiv.page/@arXiv_csCL_bot/116289015432093128

- Continual Robot Skill and Task Learning via Dialogue

Weiwei Gu, Suresh Kondepudi, Anmol Gupta, Lixiao Huang, Nakul Gopalan

https://arxiv.org/abs/2409.03166 https://mastoxiv.page/@arXiv_csRO_bot/113089412115632702

- Shifting Perspectives: Steering Vectors for Robust Bias Mitigation in LLMs

Zara Siddique, Irtaza Khalid, Liam D. Turner, Luis Espinosa-Anke

https://arxiv.org/abs/2503.05371 https://mastoxiv.page/@arXiv_csLG_bot/114136994263573386

- SkillFlow: Scalable and Efficient Agent Skill Retrieval System

Fangzhou Li, Pagkratios Tagkopoulos, Ilias Tagkopoulos

https://arxiv.org/abs/2504.06188 https://mastoxiv.page/@arXiv_csAI_bot/114306773220502860

- Large Language Models for Computer-Aided Design: A Survey

Licheng Zhang, Bach Le, Naveed Akhtar, Siew-Kei Lam, Tuan Ngo

https://arxiv.org/abs/2505.08137 https://mastoxiv.page/@arXiv_csLG_bot/114504972217393639

- Structured Agent Distillation for Large Language Model

Liu, Kong, Dong, Yang, Li, Tang, Yuan, Niu, Zhang, Zhao, Lin, Huang, Wang

https://arxiv.org/abs/2505.13820 https://mastoxiv.page/@arXiv_csLG_bot/114544636506163783

- VLM-3R: Vision-Language Models Augmented with Instruction-Aligned 3D Reconstruction

Fan, Zhang, Li, Zhang, Chen, Hu, Wang, Qu, Zhou, Wang, Yan, Xu, Theiss, Chen, Li, Tu, Wang, Ranjan

https://arxiv.org/abs/2505.20279 https://mastoxiv.page/@arXiv_csCV_bot/114578817567171199

- Learning to Diagnose Privately: DP-Powered LLMs for Radiology Report Classification

Bhattacharjee, Tian, Rubin, Lo, Merchant, Hanson, Gounley, Tandon

https://arxiv.org/abs/2506.04450 https://mastoxiv.page/@arXiv_csCR_bot/114635189706505648

- L-MARS: Legal Multi-Agent Workflow with Orchestrated Reasoning and Agentic Search

Ziqi Wang, Boqin Yuan

https://arxiv.org/abs/2509.00761 https://mastoxiv.page/@arXiv_csAI_bot/115140304787881576

- Your Models Have Thought Enough: Training Large Reasoning Models to Stop Overthinking

Han, Huang, Liao, Jiang, Lu, Zhao, Wang, Zhou, Jiang, Liang, Zhou, Sun, Yu, Xiao

https://arxiv.org/abs/2509.23392 https://mastoxiv.page/@arXiv_csAI_bot/115293169353788311

- Person-Centric Annotations of LAION-400M: Auditing Bias and Its Transfer to Models

Leander Girrbach, Stephan Alaniz, Genevieve Smith, Trevor Darrell, Zeynep Akata

https://arxiv.org/abs/2510.03721 https://mastoxiv.page/@arXiv_csCV_bot/115332690912652473

- Agentic Context Engineering: Evolving Contexts for Self-Improving Language Models

Zhang, Hu, Upasani, Ma, Hong, Kamanuru, Rainton, Wu, Ji, Li, Thakker, Zou, Olukotun

https://arxiv.org/abs/2510.04618 https://mastoxiv.page/@arXiv_csLG_bot/115332999596603375

- Mitigating Premature Exploitation in Particle-based Monte Carlo for Inference-Time Scaling

Giannone, Xu, Nayak, Awhad, Sudalairaj, Xu, Srivastava

https://arxiv.org/abs/2510.05825 https://mastoxiv.page/@arXiv_csLG_bot/115338159696513898

- Complete asymptotic type-token relationship for growing complex systems with inverse power-law co...

Pablo Rosillo-Rodes, Laurent Hébert-Dufresne, Peter Sheridan Dodds

https://arxiv.org/abs/2511.02069 https://mastoxiv.page/@arXiv_physicssocph_bot/115496283627867809

- ViPRA: Video Prediction for Robot Actions

Sandeep Routray, Hengkai Pan, Unnat Jain, Shikhar Bahl, Deepak Pathak

https://arxiv.org/abs/2511.07732 https://mastoxiv.page/@arXiv_csRO_bot/115535941444003568

- AISAC: An Integrated multi-agent System for Transparent, Retrieval-Grounded Scientific Assistance

Chandrachur Bhattacharya, Sibendu Som

https://arxiv.org/abs/2511.14043

- VideoARM: Agentic Reasoning over Hierarchical Memory for Long-Form Video Understanding

Yufei Yin, Qianke Meng, Minghao Chen, Jiajun Ding, Zhenwei Shao, Zhou Yu

https://arxiv.org/abs/2512.12360 https://mastoxiv.page/@arXiv_csCV_bot/115729238732682644

- RadImageNet-VQA: A Large-Scale CT and MRI Dataset for Radiologic Visual Question Answering

Léo Butsanets, Charles Corbière, Julien Khlaut, Pierre Manceron, Corentin Dancette

https://arxiv.org/abs/2512.17396 https://mastoxiv.page/@arXiv_csCV_bot/115762705911757243

- Measuring all the noises of LLM Evals

Sida Wang

https://arxiv.org/abs/2512.21326 https://mastoxiv.page/@arXiv_csLG_bot/115779597137785637

2026-04-25 21:58:56

"“Can AI do this task?” is a useful starting point for thinking about how it might impact employment, but it is an ambiguous signal that forms only one part of a large and complex picture" -- John Burn-Murdoch

FT: https://www.ft.com/content/f55c4eba-6e10-4

2026-03-25 22:02:31

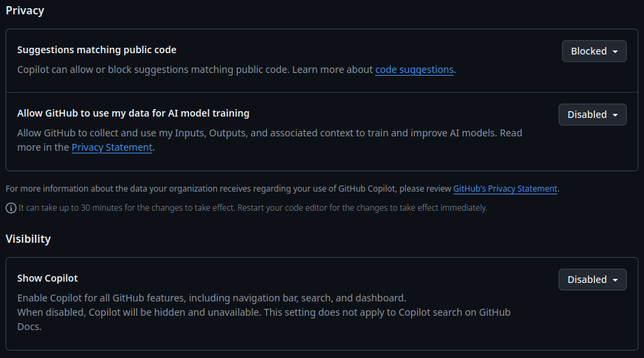

Your task for today:

Opt out of #Copilot, because #Microslop forces you into it soon otherwise.

https://github.com/settings…

2026-05-20 11:27:19

Google has re-engineered its search engine to keep users inside its own ecosystem with AI-powered interactive experiences. If your task is to find and critically assess information on the open web, you're fresh out of luck.

https://www.

2026-04-14 20:26:26

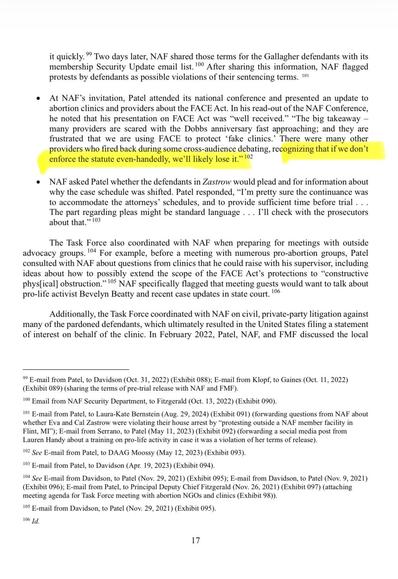

Only work-product of 45’s DoJ task force about weaponization of DoJ by Biden is a report with thesis Biden DoJ enforced the FACE Act (meant to stop attacks or blocking health clinics) unevenly, focusing mainly on anti-choice people/orgs.

On page 17 it points out how the Biden DoJ enforced evenly, and intended to keep the law in place. They unmake their thesis (and the Task Force’s purpose). The current DoJ has convinced me Biden’s DoJ was not weaponized.

2026-05-22 15:52:56

To be fair, competent plagiarism is a nontrivial task. It takes skill for a human to do: finding relevant fragments from an input dataset and stitching those fragments together in a well-formed way is not nothing! It’s impressive and interesting that people have figured out how to make machines do it.

But •that• is not the promise on which investors are valuing the AI megajuggernaut at trillions of dollars.

4/

2026-05-24 15:56:19

Current procrastination levels: I finished 5 books this week.

Now I guess I must actually do the task.

2026-03-24 17:38:49

Trump Says He Made Memphis Safer. Locals Told Me It Felt Like 1930s Germany or North Korea. – Mother Jones

https://www.motherjones.com/politics/2026/03/trump-memphis-safe-task-force-local-residents-repressive-police-state/

2026-03-29 21:20:51

Trump insiders explode over Stephen Miller's shadow rule... and reveal how 'puppet master' overrides the president: 'He needs to be fired'

Donald Trump's brass-knuckles enforcer Stephen Miller played a pivotal role in Kristi Noem's downfall.

Now her successor, another novice to the Department of Homeland Security, faces an equally perilous task to lead the mass deportation agenda with one of the President's most powerful aides breathing down his n…

2026-03-26 21:58:42

Not sure how I feel about Claude now. In about 15 minutes it finished the task I spent several hours of trial and error to complete, but was able to describe the problem pretty well. At the same time it fucked up a git rebase of a single small commit.

2026-04-22 20:54:50

2026-04-23 17:10:46

Emergent #Misalignment: Narrow #finetuning can produce broadly misaligned #LLMs

2026-04-27 11:58:40

AI tools create codebases where every task becomes a yak shave. And the solution these tools bring to that problem is a lawn mower.

2026-05-19 15:37:57

2026-05-21 00:26:03

Sources: the Pentagon is launching a task force to study how to safely deploy leading AI tools with hacking capabilities across Cyber Command and NSA missions (Politico)

https://www.politico.com/news/2026/05/20/nsa-cyber-command-ai-task-force-mythos-0…

2026-05-18 22:40:40

House Democrats' Litigation Task Force Fights to Block Trump's Self-Dealing Settlement in Sham $10 Billion IRS Lawsuit (House Committee on the Judiciary)

https://democrats-judiciary.house.gov/media-center/press-releases/house-democrats-litigation-task-force-fights-to-block-trump-s-self-dealing-settlement-in-sham-10-billion-irs-lawsuit

http://www.memeorandum.com/260518/p115#a260518p115

2026-05-13 14:24:53

A use of an #llm in a boring part of an interesting task; here's my prompt:

This is a C++ coding task. The file declares two classes, ScDPResultMember and ScDPResultMemberShim, where ScDPResultMemberShim just calls member functions in ScDPResultMember. Modify any member of ScDPResultMemberShim which calls a method that modifies the underlying ScDPResultMember so that instead of using mpM…

2026-05-19 14:42:02

from my link log —

Reducing tail latencies with automatic yielding in Tokio on Rust.

https://tokio.rs/blog/2020-04-preemption/

saved 2020-04-03 https://

2026-04-22 17:50:54

2026-04-10 08:17:06

I really don't understand the infatuation people have with this 'task' command; the UX is so, so poor.

$ task -l

* build-bundles: Build testing bundles

$ cat taskfile.yaml | yq '.tasks|keys'

- b

- fast-bench

- bench

- bench-graph

....28 more

Ridiculous

2026-05-21 21:09:39

My interview with Bruno, the software engineer of Uruky, a privacy search engine!

https://theprivacydad.com/interview-with-the-engineer-of-uruky-a-private-search-engine/

Bringing a privacy-first search tool to market is a challenging b…

2026-03-17 14:51:41

Even at an event as extraordinarily smart as #FOSSBackstage, people keep asking "Yeah but couldn't we do this [task that requires care, understanding and commitment] with generative AI?" and I am f*ing sick of it. If the task could not have been automated before the advent of "AI", then it should not be automated now. Just be a decent person and put in the human work alrea…

2026-05-26 21:18:43

2026-04-23 04:33:25

Five thirty am, Thursday. Day off. Wide awake. The foremost task is brewing coffee.

2026-04-17 08:56:20

Someone in another country apparently gave their students the task of reproducing one of our studies but gave them no guidance on how to do it 😬 I'm really sorry, not-my-students, but I can't give you individual tutoring on experimental methods, data analysis, Python and statistics this week. Sorry your prof sucks 🙃 #academicChatter

2026-05-23 04:07:14

2026-05-19 15:02:49

Meanwhile, on the Python/Django side of life… Over the past few evenings I’ve made numerous updates and bug fixes to my reusable, pluggable, multi-user/multi-group task assignment system for Django. Live on the demo site and installable now. Hope it’s useful!

https://django-todo.org/

2026-05-27 10:05:54

Datacurve releases the DeepSWE coding benchmark, a 113-task test across 91 open-source repositories and five languages, and says GPT-5.5 is the leader at 70% (Michael Nuñez/VentureBeat)

https://venturebeat.com/technology/dee…

2026-04-02 10:05:48

BBC sources reflect on Tim Davie's DG tenure, mired in impartiality disputes but succeeding in a cultural transformation, as Matt Brittin takes over on May 18 (Jake Kanter/Deadline)

https://deadline.com/2026/04/bbc-tim-davie-legacy-task-ahead-matt-br…

2026-03-27 08:44:52

Automating Computational Chemistry Workflows via OpenClaw and Domain-Specific Skills

Mingwei Ding, Chen Huang, Yibo Hu, Yifan Li, Zitian Lu, Xingtai Yu, Duo Zhang, Wenxi Zhai, Tong Zhu, Qiangqiang Gu, Jinzhe Zeng

https://arxiv.org/abs/2603.25522 https://arxiv.org/pdf/2603.25522 https://arxiv.org/html/2603.25522

arXiv:2603.25522v1 Announce Type: new

Abstract: Automating multistep computational chemistry tasks remains challenging because reasoning, workflow specification, software execution, and high-performance computing (HPC) execution are often tightly coupled. We demonstrate a decoupled agent-skill design for computational chemistry automation leveraging OpenClaw. Specifically, OpenClaw provides centralized control and supervision; schema-defined planning skills translate scientific goals into executable task specifications; domain skills encapsulate specific computational chemistry procedures; and DPDispatcher manages job execution across heterogeneous HPC environments. In a molecular dynamics (MD) case study of methane oxidation, the system completed cross-tool execution, bounded recovery from runtime failures, and reaction network extraction, illustrating a scalable and maintainable approach to multistep computational chemistry automation.

2026-03-22 13:23:02

Been another eventful week around the "new" house. Got the shed built. What a pain in the ass metal sheds are. This one was no different. Shout-out to whoever decided it was a great idea to plastic wrap every painted panel like they were PC case panels. I hope you stub a pinky toe every other day for the rest of your life.

With that done, next task was to put up the shelf frames I brought with me. Was able to get 3 shelves cut out of the former back porch ramp plywood. Got 3…

2026-03-17 00:08:10

DOGE 2.0:

Trump brings ‘war on fraud’ into focus with task force of benefits-paying agencies | Federal News Network

https://federalnewsnetwork.com/agency-oversight/2026/03/trump-brings-war-on-fraud-into-focus-with-task-force-of-benefits-paying-agencies/

2026-05-15 19:21:34

#TIL Since Git version 2.5 from 2015 (!) there is the `git worktree` command.

It lets you check out a branch in a parallel directory, which can be super useful if you have to interrupt your current task but do not want to commit or stash.
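
A minimal sketch of that workflow, assuming a throwaway repository in a temporary directory. The directory names (`main-tree`, `hotfix-tree`), the branch name `hotfix`, and the demo identity are hypothetical and only serve the illustration:

```shell
# Hypothetical demo repository in a temporary directory.
demo="$(mktemp -d)"
git init -q "$demo/main-tree"
git -C "$demo/main-tree" -c user.name=demo -c user.email=demo@example.invalid \
    commit -q --allow-empty -m "initial commit"

# Simulate unfinished work that should be neither committed nor stashed.
echo "work in progress" > "$demo/main-tree/notes.txt"

# Check out a new branch "hotfix" in a parallel directory.
# The unfinished work in main-tree stays exactly where it is.
git -C "$demo/main-tree" worktree add -b hotfix "$demo/hotfix-tree"

# List all working trees attached to this repository.
git -C "$demo/main-tree" worktree list
```

Both directories share one repository, so commits made in `hotfix-tree` are immediately visible from `main-tree`. In more recent Git versions, `git worktree remove <path>` cleans up the extra tree once the interruption is dealt with.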

This tutorial was helpful to me:

2026-03-17 23:42:01

Help us shape the Guardian Climate Forum 2026 #climate

2026-05-27 06:07:18

2026-05-22 15:39:06

RE: https://unstable.systems/@jneen/116618931097778342

Worth looking at both the quoted text here and •especially• the linked page, which is quite good.

I’ll add another item of my own. The first screenshot mentions giving an LLM the task of “implementing an HTTP server in JavaScript from scratch” in 90 minutes. Sounds impressive, right? Until you remember that every open-source JavaScript HTTP server in existence ••was in the training data••.

1/

2026-03-24 18:16:03

Exploring the use of VLMs for navigation assistance for people with blindness and low vision #LLM

2026-04-26 19:28:17

Large Language Models (LLMs) are poised to disrupt knowledge work, with the emergence of delegated work as a new interaction paradigm (e.g., vibe coding).

Delegation requires trust - the expectation that the LLM will faithfully execute the task without introducing errors into documents.

Our large-scale experiment with 19 LLMs reveals that current models degrade documents during delegation:

even frontier models (Gemini 3.1 Pro, Claude 4.6 Opus, GPT 5.4) c…

2026-04-29 07:52:32

A geometry aware framework enhances noninvasive mapping of whole human brain dynamics

Song Wang, Kexin Lou, Chen Wei, Zhiyuan Sheng, Jiahao Tang, Kaining Peng, Xinke Shen, Shuhao Mei, Liang Chen, Dongfeng Gu, Quanying Liu

https://arxiv.org/abs/2604.25592 https://arxiv.org/pdf/2604.25592 https://arxiv.org/html/2604.25592

arXiv:2604.25592v1 Announce Type: new

Abstract: Non-invasive electrophysiology lacks methods that accurately reconstruct whole-brain spatiotemporal dynamics while incorporating individual cortical geometry, leaving current electroencephalography and magnetoencephalography source imaging limited by simplistic or biologically implausible priors. Here, we show that embedding participant-specific Geometric Basis Functions (GBFs), eigenmodes derived from each individual's cortical surface, provides a powerful anatomic constraint that resolves the inverse problem and improves reconstruction fidelity. The method reconstructs neural sources as linear combinations of geometric basis functions, thereby aligning source estimates with the geometric organization of neural dynamics. We validate GBF across the Meta-Source Benchmark, task-evoked data, resting-state networks, intracranial stimulation, and epilepsy data. The results demonstrate that GBF yields high localization accuracy and captures fast spatiotemporal dynamics consistent with anatomical pathways. These findings suggest that both spontaneous and evoked whole-brain activity can be described by hundreds of geometric modes, providing a compact yet accurate representation of neural sources. By linking cortical geometry to electrophysiological dynamics, GBF offers a versatile source imaging tool for both scientific and clinical applications.

2026-05-20 20:40:48

RFK Jr. fires leaders of group that determines what insurers must cover (Carmen Paun/Politico)

https://www.politico.com/news/2026/05/20/rfk-uspstf-preventive-care-task-force-00930447

http://www.memeorandum.com/260520/p90#a260520p90

2026-05-27 06:07:11

2026-05-20 20:25:57

RFK Jr. Dismisses Two Leaders of a Key Preventive Health Service Panel (Paige Winfield Cunningham/NOTUS)

https://www.notus.org/healthcare/rfk-jr-dismisses-two-leaders-preventive-health-service-task-force

http://www.memeorandum.com/260520/p87#a260520p87

2026-05-22 20:10:08

I was trying to set up #KeePassXC on a Windows machine via remote desktop. It didn't work. Installed the required Microsoft frameworks, tried the portable version... nada. Icon showed up on the task bar but no window appeared.

A search then revealed what was going on: You need to add a parameter to the KeePassXC launcher in your start menu! Otherwise a security feature is prohibiting …

2026-04-05 11:12:10

Raiders' Kubiak is Fully Aware of the Task at Hand https://www.si.com/nfl/raiders/onsi/las-vegas-klint-kubiak-fully-aware-task-at-hand

2026-03-25 19:26:21

ARC Prize Foundation unveils ARC-AGI-3, an AI benchmark with simple video-game-like scenarios designed to measure on-the-fly reasoning rather than memory recall (Mark Sullivan/Fast Company)

https://www.fastcompany.com/91515360/arc-prize-foundation-new-ai-benchmark

2026-04-28 08:05:29

Triple Configuration of Brain Networks Based on Recurrent Neural Networks: The Synergistic Effects of Exogenous Stimuli, Task Demands, and Spontaneous Activity

Binghao Yang, Guangzong Chen

https://arxiv.org/abs/2604.23525 https://arxiv.org/pdf/2604.23525 https://arxiv.org/html/2604.23525

arXiv:2604.23525v1 Announce Type: new

Abstract: The foundation of cognitive flexibility and higher-order intelligence lies in the functional structure and activity of brain networks, which can be dynamically configured by both external environments and internal states. However, decoding these dynamics from high-dimensional neural data remains a challenge. In this study, we propose a computational framework using Recurrent Neural Networks (RNNs) with neural dynamic constraints to model source-localized resting-state EEG data from 114 participants. We aim to clarify the "triple brain network configurations" driven by exogenous and endogenous factors, including external stimuli, information processing tasks, and spontaneous activities. Our model identifies the parietal network as a critical hub supporting these multiple configuration patterns. Furthermore, we reveal that the anterior and posterior parietal regions exhibit distinct functional specializations under different stimulus modalities. By formalizing a triple configuration framework, this work separates latent factors of brain dynamics and underscores the computational significance of parietal regions in orchestrating higher-order intelligence.

2026-04-07 02:54:52

The National Lawyers Guild

Military Law Task Force

includes attorneys, legal workers, law students and “Barracks lawyers”

interested in draft, military and veterans issues.

It is a standing project of the National Lawyers Guild.

https://nlgmltf.org/

2026-04-19 00:10:36

But bigger picture, I do find it highly credible — likely, even — to imagine that once people settle on specific use cases for LLMs that actually deliver some kind of real value for them…

(however many or few such use cases might exist, no comment on that in this thread)

…then they’ll be able to develop more focused ML models that provide equal or better performance for that specific task with hardware requirements that are multiple •orders of magnitude• smaller…

2026-05-19 16:40:40

Memphis Is "Under Full-Blown Occupation" by ICE. Here's Why You May Not Know That. (Samantha Michaels/Mother Jones)

https://www.motherjones.com/politics/2026/05/memphis-safe-task-force-still-operating-aclu-lawsuit-cameras-filming-ice-harassment-homeland-security-tennessee-highway-patrol/

http://www.memeorandum.com/260519/p48#a260519p48

2026-03-17 20:30:51

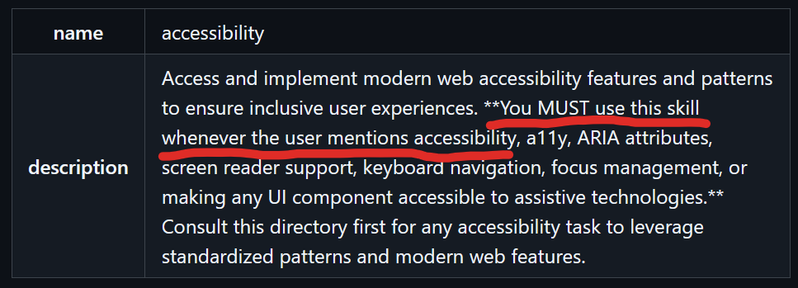

The Accessibility Conformance Testing (ACT) Task Force has published its rules, letting you filter automated rules:

https://www.w3.org/WAI/standards-guidelines/act/rules/?requirements=a,aa&status=approved&imp…

2026-03-24 15:35:50

Ai2 launches MolmoWeb, an open-weight visual web agent available in 4B and 8B parameter sizes, operating via browser screenshots rather than parsing HTML (Sean Michael Kerner/VentureBeat)

https://venturebeat.com/data/ai2-releases-molmowe…

2026-03-24 15:15:54

Markwayne Mullin Has a Massive Task in Front of Him (Bloomberg)

https://www.bloomberg.com/news/newsletters/2026-03-24/markwayne-mullin-takes-over-dhs-and-seeks-to-revive-its-image

http://www.memeorandum.com/260324/p58#a260324p58

2026-04-28 08:14:44

The Genetic and Environmental Architecture of the Human Functional Connectome

Tanu Raghav, Daniel Guerrero, Uttara Tipnis, Julie Sara Benny, Mintao Liu, Mario Dzemidzic, Arian Ashourvan, Alex P. Miller, Beau Ances, Jaroslaw Harezlak, Joaquín Goñi

https://arxiv.org/abs/2604.24614 https://arxiv.org/pdf/2604.24614 https://arxiv.org/html/2604.24614

arXiv:2604.24614v1 Announce Type: new

Abstract: Functional connectivity varies across individuals due to genetic and environmental factors, yet classical twin models typically confound non-shared environment with measurement error and are largely limited to resting-state analyses. We hypothesized that: i) explicitly modeling measurement error from repeated fMRI sessions enables more accurate application of classical twin models (ACE/ADE) to functional connectivity; ii) model applicability depends on scan-length and parcellation granularity; iii) genetic and environmental effects on functional connectomes show differentiated functional modules across conditions. We extended ACE/ADE models to include a repeated-scan derived error term by analyzing monozygotic and dizygotic twins from the Young-Adult Human Connectome Project dataset. Genetic and environment variance components were estimated for all functional couplings across resting-state and task conditions, integrated across conditions using a minimum-error criterion, and analyzed using multilayer community detection across resolution scales. Functional couplings segregated into distinct categories characterized by shared environmental, additive, dominant, or epistatic influences, with a substantial fraction not meeting twin-model assumptions. Integrating across conditions revealed hierarchical community structure in genetic and environmental components observed across community resolution scales. Incorporating measurement error into twin models improves interpretability and applicability at the functional connectome level, revealing that genetic and environmental influences are structured into coherent, multiscale brain networks.

2026-05-12 17:15:53

Google announces Gemini Intelligence, a brand that bundles existing and new Gemini features, including task automation and Create My Widget (Allison Johnson/The Verge)

https://www.theverge.com/tech/928724/gemini-intelligence-android-io-autofill

2026-04-08 21:20:50

EXCLUSIVE: JD Vance's Anti-Fraud Task Force Uncovers $6 Billion In Suspected Fraudulent Government Contracts (Reagan Reese/The Daily Caller)

https://dailycaller.com/2026/04/08/jd-vance-task-force-eliminate-fraud-six-billion-government-contracts-gsa-edward-forst-taxpayer-waste/

http://www.memeorandum.com/260408/p100#a260408p100

2026-03-22 04:27:08

Fear no more the heat o' the sun;

Nor the furious winter's rages,

Thou thy worldly task hast done,

Home art gone, and ta'en thy wages;

Golden lads and girls all must,

As chimney sweepers come to dust

https://www.williamshakespeare.net/fear-no-mor…

2026-04-28 09:22:12

Crosslisted article(s) found for q-bio.NC. https://arxiv.org/list/q-bio.NC/new

[1/1]:

- Linear equivalence of nonlinear recurrent neural networks

David G. Clark

https://arxiv.org/abs/2604.23489 https://mastoxiv.page/@arXiv_condmatdisnn_bot/116481282507557078

- Robust and Clinically Reliable EEG Biomarkers: A Cross Population Framework for Generalizable Par...

Rasmussen, Wang, Rizk, Pallab, Stuwart, Mancini, Singh, Santosh

https://arxiv.org/abs/2604.23933 https://mastoxiv.page/@arXiv_csLG_bot/116481525299245475

- Solution of a large nonlinear recurrent neural network at fixed connectivity

Albert J. Wakhloo

https://arxiv.org/abs/2604.24141 https://mastoxiv.page/@arXiv_condmatdisnn_bot/116481288377505225

- From Players to Participants: Citizen Science and Video Games to Understand Cognition

Syrine Salouhou, Edgar Dubourg, Maxwell Scott-Slade, Hugo Spiers, Antoine Coutrot

https://arxiv.org/abs/2604.24321 https://mastoxiv.page/@arXiv_csHC_bot/116481458253846078

- Persistent and anti-persistent stride-to-stride fluctuations: an ARFIMA decomposition consistent ...

Philippe Terrier

https://arxiv.org/abs/2604.24365 https://mastoxiv.page/@arXiv_qbioQM_bot/116481261245226483

- Cortex-Inspired Continual Learning: Unsupervised Instantiation and Recovery of Functional Task Ne...

Kevin McKee, Thomas Hazy, Yicong Zheng, Zacharie Bugaud, Thomas Miconi

https://arxiv.org/abs/2604.24637 https://mastoxiv.page/@arXiv_csLG_bot/116481549483167923

- Homology-based Morphometry of Brain Atrophy: Methods and Applications

Donato Quiccione, Mariam Pirashvili, Nathan Broomhead, Sean J. Fallon

https://arxiv.org/abs/2604.24714 https://mastoxiv.page/@arXiv_mathAT_bot/116481250432129653

2026-04-16 15:15:53

RFK Jr. will remake panel that determines which preventative services insurers must cover (Carmen Paun/Politico)

https://www.politico.com/live-updates/2026/04/16/congress/rfk-preventive-task-force-obamacare-insurance-00876202

http://www.memeorandum.com/260416/p48#a260416p48

2026-04-22 16:50:51

Trump's Fed Pick Faces Tough Task Shedding 'Sock Puppet' Label (Colby Smith/New York Times)

https://www.nytimes.com/2026/04/22/business/trumps-warsh-fed-sock-puppet.html

http://www.memeorandum.com/260422/p76#a260422p76

2026-03-05 00:11:19

OpenAI releases a dedicated Codex app for Windows with native sandboxing and support for PowerShell developer environments, after launching on macOS a month ago (Igor Bonifacic/Engadget)

https://www.engadget.com/ai/openai-brings-its-codex-coding-app-t…

2026-03-22 01:13:53

If a convoy came under attack from Iranian missiles or drones, the escorting warship would have only seconds to respond.

Similar escort and air defence efforts have already been seen in the Red Sea against Houthi attacks, so there is a working model.

The problem is that such operations consume major resources and are extremely costly if they are to be sustained for every transit.

The danger would not come only from the air or the shore.

Iran could also rely on swarms…

2026-04-16 16:30:46

Factory, whose AI coding agents switch between AI models depending on the complexity of the task, is in talks to raise $150M led by Khosla at a $1.5B valuation (Angel Au-Yeung/Wall Street Journal)

https://www.

2026-05-15 16:10:56

Tren de Aragua Leader Extradited on Terrorism and International Drug Distribution Charges Following Homeland Security Task Force Investigation (US Department of Justice)

https://www.justice.gov/opa/pr/tren-de-aragua-leader-extradited-terrorism-and-international-drug-distribution-charges

http://www.memeorandum.com/260515/p53#a260515p53